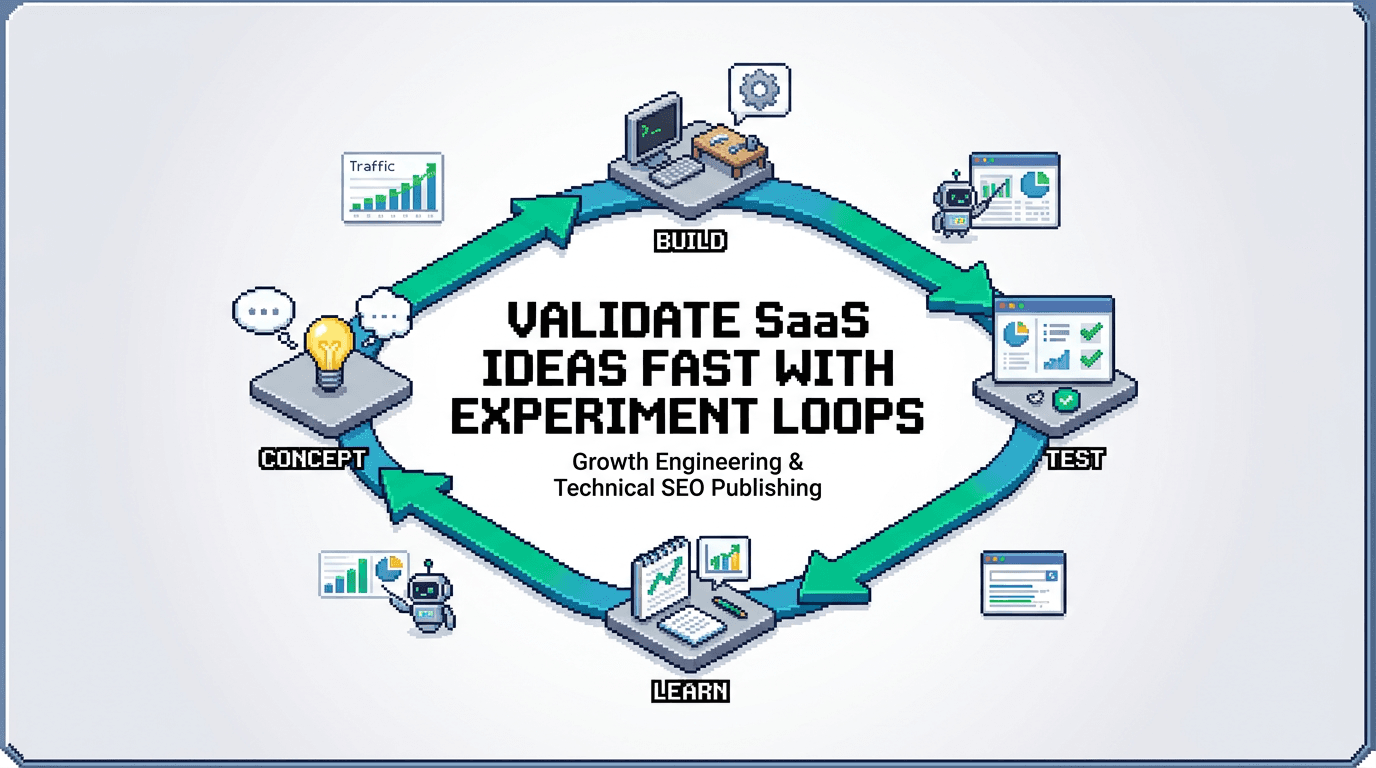

Most SaaS ideas fail from slow validation, not bad vision. Experiment loops compress the time between hypothesis, signal, and decision so you can ship with confidence.

This post shows operators how to validate SaaS ideas using experiment loops and a content-first approach. You will design a search architecture, run programmatic SEO probes, wire automation workflows, and close the loop with distribution. The takeaway: treat validation as a repeatable execution playbook that produces yes-or-no signals in days, not months.

What Is an Experiment Loop and Why It Matters

An experiment loop is a compact system that turns ideas into measurable signals fast. It minimizes scope, maximizes learning, and repeats.

Core components

- Hypothesis: a falsifiable claim tied to a buyer job to be done

- Probe: a minimal artifact that can collect proof or disproof

- Distribution: a path to real users who can respond

- Measurement: predefined metrics, thresholds, and timing

- Decision: keep, pivot, or kill
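
The five components above can be captured in one small record, so no loop ships without a threshold and a timebox. This is a minimal sketch, not a prescribed schema: the `ExperimentLoop` class, its field names, and the `is_ready_to_ship` check are all assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Decision(Enum):
    KEEP = "keep"
    PIVOT = "pivot"
    KILL = "kill"


@dataclass
class ExperimentLoop:
    """Hypothetical record for one loop; field names are illustrative."""
    hypothesis: str            # falsifiable claim tied to a buyer job to be done
    probe: str                 # minimal artifact, e.g. "landing page"
    distribution: List[str]    # channels that reach real users
    metric: str                # predefined metric name
    threshold: float           # value the metric must reach
    timebox_days: int          # when the measurement is taken
    decision: Optional[Decision] = None  # filled in at review time

    def is_ready_to_ship(self) -> bool:
        # Ready only when every component is defined before launch.
        return bool(
            self.hypothesis
            and self.probe
            and self.distribution
            and self.metric
            and self.timebox_days > 0
        )
```

Leaving `decision` empty until review makes it easy to list loops that are past their timebox and still undecided.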

How it reduces risk

- Small bets limit sunk cost

- Fast feedback detects dead ends early

- Repetition compounds insight and precision

- Shared cadence aligns product, content, and growth

The Content-First Playbook for Validation

Content is the lowest-cost way to simulate the product promise. Use it to test demand, messaging, and distribution fit before writing code.

Choose a sharp problem

- Pull from support tickets, sales calls, or niche forums

- Map to a segment with budget and urgency

- Write the outcome in one line: who, job, constraint, metric

Design the minimum artifact

- Options: landing page, teardown post, calculator, checklist, dataset, or demo video

- Target build time: 1 to 3 days

- Include a clear call to action: waitlist, calendar link, or benchmark run

Search Architecture for Idea Discovery

Search reveals intent at scale. Build a simple SEO architecture to find and size problems.

Keyword to problem mapping

- Cluster keywords by job to be done, not just phrases

- Identify head, mid, and long-tail intent for the same job

- Score by search volume, business fit, and difficulty
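
One way to turn the scoring bullet into a ranked list is sketched below. The formula is an assumption, not a standard: it dampens volume with a logarithm, rewards business fit, and penalizes difficulty. The `KeywordCluster` fields and their scales (fit 1 to 5, difficulty 1 to 100) are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class KeywordCluster:
    job: str              # the job to be done this cluster maps to
    monthly_volume: int   # summed search volume for the cluster
    business_fit: int     # 1 (weak) to 5 (strong), judged by the team
    difficulty: int       # 1 (easy) to 100 (hard), from your SEO tool


def cluster_score(c: KeywordCluster) -> float:
    # Assumed formula: log-dampened volume times fit, divided by difficulty.
    return math.log10(c.monthly_volume + 1) * c.business_fit / max(c.difficulty, 1)


def rank_clusters(clusters: List[KeywordCluster]) -> List[KeywordCluster]:
    # Highest score first.
    return sorted(clusters, key=cluster_score, reverse=True)
```

With this weighting, a modest-volume cluster with strong fit and low difficulty can outrank a high-volume cluster that fits poorly.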

Programmatic SEO probes

- Generate templated pages for repeatable entities, metrics, or frameworks

- Use structured data to improve indexation and click-through rate

- Gate with a small waitlist CTA to measure buying intent
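
A templated probe page can be sketched as a function that fills a title pattern, attaches JSON-LD structured data, and carries the waitlist CTA. The title pattern, the `render_probe` name, and the returned dictionary shape are assumptions. The JSON-LD uses the schema.org `Article` type with its `headline` and `about` properties.

```python
import json
from string import Template

# Hypothetical title pattern for one template family.
TITLE_TEMPLATE = Template("How $persona can $job with $tool")


def render_probe(persona: str, job: str, tool: str) -> dict:
    title = TITLE_TEMPLATE.substitute(persona=persona, job=job, tool=tool)
    json_ld = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": title,
        "about": job,
    }
    return {
        "title": title,
        "json_ld": json.dumps(json_ld),  # embed in a script tag of type application/ld+json
        "cta": "Join the waitlist",      # the buying-intent measurement point
    }
```

Each row of persona, job, and tool in your dataset yields one page, so the page count scales with the data rather than with writing time.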

Build Programmatic SEO That Respects Quality

Programmatic SEO can validate ideas fast, but only if quality is enforced. Treat it like a schema-driven publishing system.

Define the entity model

- Entities: persona, job to be done, tool, workflow, metric

- Relationships: persona uses tool to complete job and improve metric

- Each entity powers a template with unique insights

Templating and signals

- Mandatory sections: problem framing, steps, pitfalls, benchmarks

- Inject real data: quotes from calls, anonymized metrics, code snippets

- Acceptance checks: no duplicate titles, unique value score, factual references
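
Two of these checks are easy to automate before publishing: duplicate titles and missing mandatory sections. The sketch below assumes each page is a dictionary with `title` and `sections` keys, which is a hypothetical shape. The unique value score and factual-reference checks need human or model review and are not covered here.

```python
from collections import Counter
from typing import Dict, List

REQUIRED_SECTIONS = ("problem framing", "steps", "pitfalls", "benchmarks")


def acceptance_errors(pages: List[Dict]) -> List[str]:
    errors = []

    # Titles are compared case-insensitively with surrounding whitespace removed.
    title_counts = Counter(p["title"].strip().lower() for p in pages)
    for title, count in title_counts.items():
        if count > 1:
            errors.append(f"duplicate title ({count}x): {title}")

    # Every page must include all mandatory sections.
    for p in pages:
        missing = [s for s in REQUIRED_SECTIONS if s not in p["sections"]]
        if missing:
            errors.append(f"{p['title'].strip()}: missing sections {missing}")

    return errors
```

An empty list means the batch passes these two gates. Anything else should block publishing.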

Automation Workflows to Run Loops Weekly

Automation keeps loops on schedule and reduces manual drag.

Capture, enrich, and prioritize

- Intake: route ideas from CRM, Slack, and docs into a backlog

- Enrich: attach search data, ICP tags, and evidence links

- Prioritize: score with RICE or ICE under a 7-day timebox constraint
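
The prioritization step can be sketched with the usual RICE formula (reach times impact times confidence, divided by effort). Measuring effort in days and dropping ideas that exceed the timebox are assumptions made to fit the 7-day constraint. The `Idea` class is hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Idea:
    name: str
    reach: float        # people the probe can reach within the timebox
    impact: float       # relative impact per person, e.g. 0.25 to 3
    confidence: float   # 0.0 to 1.0
    effort_days: float  # build effort in days (assumed unit)


def rice(idea: Idea) -> float:
    return idea.reach * idea.impact * idea.confidence / idea.effort_days


def prioritize(ideas: List[Idea], timebox_days: float = 7) -> List[Idea]:
    # Ideas that cannot be built inside the timebox are excluded, not down-ranked.
    fits = [i for i in ideas if 0 < i.effort_days <= timebox_days]
    return sorted(fits, key=rice, reverse=True)
```

Excluding oversized ideas outright pushes the team to split them into smaller probes instead of letting them sit in the backlog.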

Ship, measure, and decide

- Auto-generate briefs from selected ideas and assign owners

- Publish to a staging index, smoke test, then go live

- Pull metrics to a single dashboard and trigger a decision task at day 7

Distribution Loops That Reach Real Buyers

Distribution converts artifacts into signals. Design loops that compound.

Owned and earned mix

- Owned: newsletter, product blog, docs, customer community

- Earned: partner newsletters, niche forums, podcasts, and AMAs

- Repost rules: adapt headline and lead for each channel

Social and partner workflows

- Create an asset pack: title variants, hooks, images, and quotes

- Partner swap: you feature their asset, they feature yours, and both links carry UTM parameters

- Track first-click channel and assisted conversions
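
Channel attribution depends on consistent link tagging. Here is a small helper, using only the Python standard library, that adds the three common UTM parameters while preserving any existing query string. The function name and the example values are illustrative.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_utm(url: str, source: str, medium: str, campaign: str) -> str:
    parts = urlsplit(url)
    # Keep existing query parameters, then add or overwrite the UTM ones.
    query = dict(parse_qsl(parts.query))
    query.update(
        {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    )
    return urlunsplit(parts._replace(query=urlencode(query)))


# Example: a link handed to a partner newsletter.
link = with_utm("https://example.com/waitlist?ref=a", "partner-news", "email", "loop-12")
```

Generating every partner and social link through one helper keeps source names consistent, so first-click reports do not fragment.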

Metrics, Thresholds, and Kill Criteria

Decide before you ship. Precommit to thresholds that trigger keep or kill.

Validation metrics

- Search intent: impressions, click-through rate, dwell time

- Conversion: waitlist signups, demo requests, calendar commits

- Price sensitivity: responses to price anchor, pre-orders, or deposit intent

Kill and pivot rules

- Kill if CTR stays below 1 percent after 1,000 impressions and 3 headline tests

- Keep if conversion to waitlist exceeds 5 percent from organic

- Pivot if conversion is strong in one segment but weak overall
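
The three rules above can be encoded as one function, so the day-7 decision is mechanical. The thresholds come from the rules. The evaluation order, the `"continue"` result when no rule fires, and reading "strong in one segment" as any segment above the same 5 percent bar are assumptions.

```python
from typing import Dict, Optional


def decide(
    impressions: int,
    clicks: int,
    headline_tests: int,
    organic_visits: int,
    waitlist_signups: int,
    segment_cvr: Optional[Dict[str, float]] = None,
) -> str:
    ctr = clicks / impressions if impressions else 0.0
    cvr = waitlist_signups / organic_visits if organic_visits else 0.0

    # Kill: enough exposure and headline tests, yet CTR is still under 1 percent.
    if impressions >= 1000 and headline_tests >= 3 and ctr < 0.01:
        return "kill"
    # Keep: organic waitlist conversion exceeds 5 percent.
    if cvr > 0.05:
        return "keep"
    # Pivot: weak overall, but at least one segment converts strongly.
    if segment_cvr and max(segment_cvr.values()) > 0.05:
        return "pivot"
    # Assumed fallback: not enough signal yet.
    return "continue"
```

For example, `decide(2000, 60, 3, 60, 1, {"agencies": 0.12, "enterprise": 0.0})` returns `"pivot"`, because overall conversion is under 2 percent while one segment clears the bar.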

Execution Playbooks and Cadence

Standardize the loop. Fewer decisions, more shipping.

Weekly loop

- Monday: select 2 to 3 ideas and write hypotheses

- Tuesday: build artifacts and schedule distribution

- Wednesday: publish and submit to index

- Friday: push partner placements and social threads

- Next Wednesday: review metrics and decide

Roles and tools

- Owner: product operator

- Support: content engineer, data analyst, designer

- Tools: CMS with templates, GA4, GSC, Airtable, Zapier, and a BI dashboard

Example System Blueprint

A minimal system that any lean team can implement in a week.

Inputs

- ICP doc, customer calls, support tickets, and keyword clusters

Process

- Hypothesis write up

- Template selection

- Content build with data injection

- Distribution across owned and earned channels

- Measurement and decision

Outputs

- Decision log with keep and kill records

- Ranked backlog by proven demand

- Messaging that converts across channels

Comparison: Distribution Channels by Validation Strength

This table compares common channels for early validation signals.

| Channel | Speed to Signal | Targeting Precision | Setup Effort | Signal Type |
|---|---|---|---|---|
| Programmatic SEO | Medium | High | Medium | Intent and conversion |
| Newsletter Swap | Fast | Medium | Low | Clicks and waitlist |
| Niche Forum | Fast | High | Medium | Replies and demos |
| Paid Search | Very Fast | High | Medium | CTR and CPC vs. CVR |
| Social Threads | Fast | Low | Low | Engagement and traffic |

Risk Management and Failure Modes

Expect breaks. Plan rollbacks and fixes.

Common failures

- Thin templates that duplicate content

- Weak distribution that undersamples demand

- Vanity metrics that do not predict revenue

Safeguards

- Unique value score for every page

- Minimum distribution quota per artifact

- Tie every metric to pipeline or retention proxies

From Signals to Product Decisions

Turn green lights into scoped builds, not epics.

Scope small wins

- Start with a workflow-level outcome, not a platform

- Ship a microservice, integration, or one feature that proves value

- Keep feedback open and visible

Hand off to roadmap

- Add hypothesis, data, and decision notes to the PRD

- Preserve language that converted in content for UI copy

- Set post-launch metrics that mirror the validation ones

Tooling Stack for Technical SEO and Loops

Choose tools that integrate and log decisions.

Core stack

- CMS that supports templates and structured content

- Git-based repo for templates and content schemas

- Analytics stack for search, web, and funnel

Automation and QA

- Workflows for brief generation, publishing, and reporting

- Link checkers, schema validators, and content diff alerts

- Dashboards with red and green thresholds

Realistic Timelines and Targets

Focus on weekly cycles and 90-day horizons.

Weekly targets

- Ship 2 to 3 artifacts tied to distinct hypotheses

- Achieve 1,000 impressions or 200 qualified visits per artifact

- Log a decision for every artifact within 7 to 10 days

Ninety-day outcomes

- 6 to 10 validated problems with channel fit

- 2 to 3 ready-to-build product slices

- Repeatable content and distribution machine

Governance and Documentation

Institutionalize the loop so it survives team changes.

Documentation

- Central repo for hypotheses, briefs, and results

- Version control for templates and acceptance checks

- Changelog of decisions with dates and owners
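
A decision changelog can be as simple as one JSON line per decision, appended to a file in the central repo. This is a sketch under assumptions: the field names and the `decision_log_line` helper are hypothetical, and the allowed decisions mirror the keep, pivot, and kill outcomes used throughout.

```python
import json
from datetime import date

ALLOWED_DECISIONS = {"keep", "pivot", "kill"}


def decision_log_line(hypothesis: str, decision: str, owner: str, decided_on: date) -> str:
    # Reject anything that is not one of the three agreed outcomes.
    if decision not in ALLOWED_DECISIONS:
        raise ValueError(f"unknown decision: {decision!r}")
    entry = {
        "date": decided_on.isoformat(),
        "decision": decision,
        "hypothesis": hypothesis,
        "owner": owner,
    }
    # Sorted keys keep diffs stable under version control.
    return json.dumps(entry, sort_keys=True)
```

Appending each returned line to a `.jsonl` file gives a log that is readable by people and easy to load into a dashboard.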

Governance

- Monthly review to refine thresholds and templates

- Postmortems for failed loops with action items

- Access controls and approvals for publishing

Agentic Workflows and Future Proofing

Use agentic automations to scale without losing control.

Agent roles

- Research agent aggregates intent data and clusters

- Brief agent drafts outlines with sources and gaps

- QA agent checks duplicates, facts, and schema

Guardrails

- Human in the loop on hypothesis and decision steps

- Rate-limit publishing behind quality gates

- Keep audit trails for all agent actions
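
Two of these guardrails, the quality gate with a publishing cap and the audit trail, can be sketched in one function. The draft shape (`slug`, `quality`), the 0.7 quality floor, and the daily cap of 5 are hypothetical values chosen for illustration. Human approval on hypothesis and decision steps stays outside this code.

```python
from datetime import datetime, timezone
from typing import Dict, List, Tuple


def gate_publishing(
    drafts: List[Dict],
    min_quality: float = 0.7,
    daily_cap: int = 5,
) -> Tuple[List[str], List[Dict]]:
    approved: List[str] = []
    audit: List[Dict] = []
    for draft in drafts:
        if draft["quality"] < min_quality:
            action = "rejected_quality"      # fails the quality gate
        elif len(approved) >= daily_cap:
            action = "deferred_rate_limit"   # passes, but the cap is reached
        else:
            action = "approved"
            approved.append(draft["slug"])
        # Every agent action is recorded, including rejections and deferrals.
        audit.append(
            {
                "slug": draft["slug"],
                "action": action,
                "at": datetime.now(timezone.utc).isoformat(),
            }
        )
    return approved, audit
```

Recording rejections and deferrals alongside approvals is what makes the trail useful in a postmortem.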

Key Takeaways

- Run experiment loops weekly to validate with speed and rigor.

- Use programmatic SEO and search architecture for scalable probes.

- Automate intake, publishing, and reporting to cut manual work.

- Design distribution loops that reach real buyers fast.

- Decide with thresholds, then build the smallest winning slice.

Validation is a system. Ship small, learn fast, and compound wins.