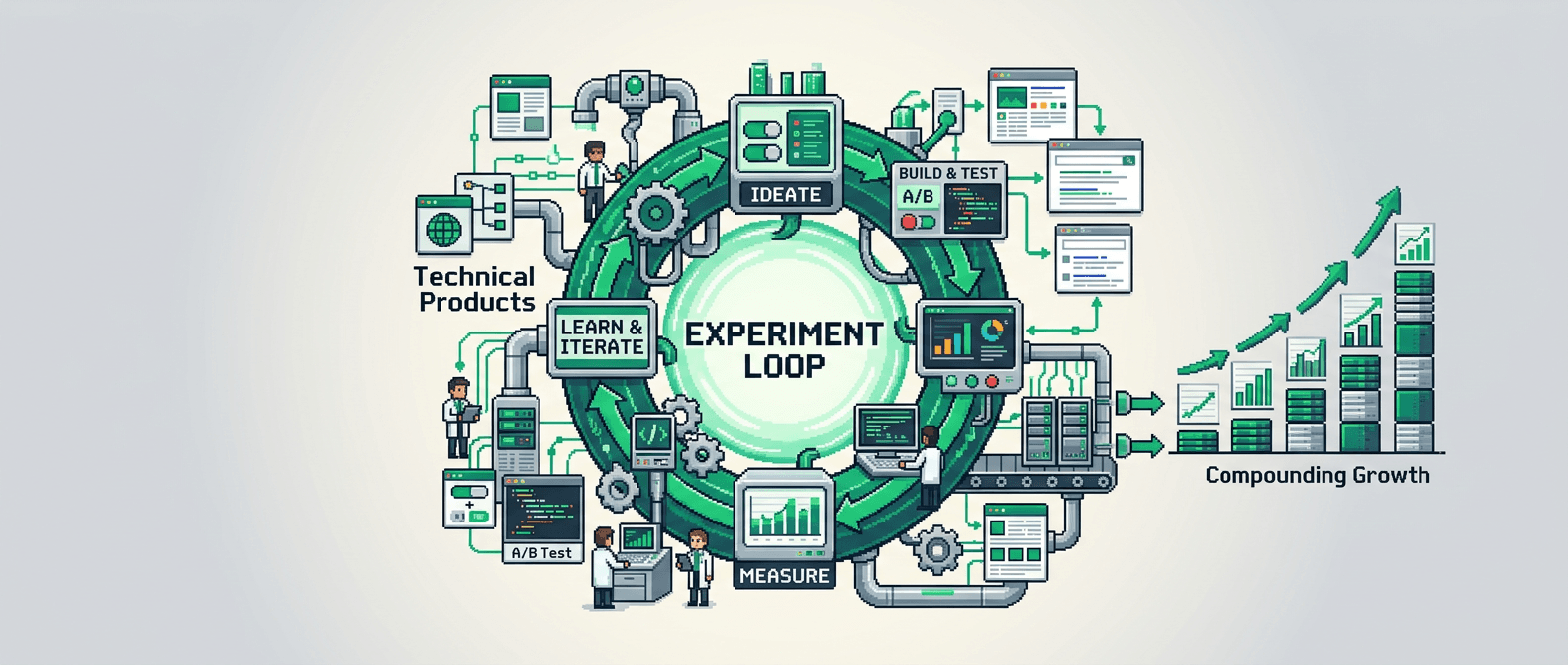

Most teams experiment by feel. High performers ship experiment loops that turn noise into compounding signal.

This post shows how to build experiment loops for technical products. It is for product operators and growth engineers who need a repeatable system. The takeaway: codify the loop, automate data capture, and schedule review cadences so each test improves the next.

What an Experiment Loop Is and Why It Wins

An experiment loop is a closed system that converts hypotheses into decisions, then feeds results into the next iteration. It reduces drift, speeds learning, and compounds gains.

Core elements of a tight loop

- Hypothesis with a measurable expected impact and a named owner

- Instrumentation and baseline

- Predefined success criteria and stop rules

- Automated data collection and QA

- Decision meeting with clear next action

- Knowledge artifact that updates strategy

Signs your loop is working

- Cycle time shrinks while quality holds

- Win rate increases quarter over quarter

- Reused components rise and net-new code falls

- Fewer orphaned experiments and clearer strategy deltas

System Architecture for Experiment Loops

Treat the loop like a product surface. Define inputs, process, and outputs with owners and SLAs.

Inputs

- Ranked opportunities from a shared backlog

- Hypotheses templated with effect size estimates

- Instrumented events and dashboards

Process

- Weekly pitch and scope

- Build behind flags

- Run for a fixed sample window or until a stop condition fires

- QA and data sanity checks
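
Building behind flags works best when each user lands in the same variant on every request. A minimal sketch of one common approach, deterministic hash bucketing, is below. The function name `assign_variant`, the ID format, and the even two-way split are assumptions for illustration, not a prescribed implementation.

```python
import hashlib


def assign_variant(experiment_id: str, user_id: str,
                   variants=("control", "treatment")) -> str:
    """Deterministically map a user to a variant for one experiment."""
    # Hash the experiment and user together so the same user can fall into
    # different buckets in different experiments.
    key = f"{experiment_id}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Use the first 32 bits of the digest as a stable bucket number.
    bucket = int(digest[:8], 16) % len(variants)
    return variants[bucket]


# Hypothetical IDs: repeated calls always return the same variant.
variant = assign_variant("exp_onboarding_fields", "user_42")
```

Because assignment depends only on the two IDs, no lookup table is needed and the exposure can be recomputed during analysis.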

Outputs

- Decision memo with effect sizes and uncertainty

- Playbook updates and component library changes

- Next test seeded by prior insights
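
A decision memo needs an effect size and a statement of uncertainty. One simple way to produce both for a conversion metric is a difference in proportions with a normal-approximation confidence interval. The sketch below assumes two variants and binary outcomes; the function name and the example counts are hypothetical.

```python
import math
from statistics import NormalDist


def diff_in_proportions(conv_control, n_control, conv_treatment, n_treatment,
                        confidence=0.95):
    """Return (effect, ci_low, ci_high) for treatment rate minus control rate."""
    p_c = conv_control / n_control
    p_t = conv_treatment / n_treatment
    effect = p_t - p_c
    # Unpooled standard error of the difference between two proportions.
    se = math.sqrt(p_c * (1 - p_c) / n_control + p_t * (1 - p_t) / n_treatment)
    # Two-sided critical value, about 1.96 for 95 percent confidence.
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    return effect, effect - z * se, effect + z * se


# Made-up counts for illustration only.
effect, low, high = diff_in_proportions(100, 1000, 120, 1000)
```

An interval that spans zero means the memo should say the result is inconclusive at that confidence level, not that the change failed.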

Automation Workflows that Remove Manual Bottlenecks

Experiment loops break when teams hand-copy data or stall on QA. Automate the boring lanes.

Data capture and sanity checks

- Auto-tag experiment IDs in event schemas

- Run nightly checks for event volume, null rates, and cardinality spikes

- Alert when guardrails breach thresholds
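
The nightly checks above can be sketched as a single function that returns alerts. Everything here is an assumption for illustration: events are plain dicts, the baseline volume comes from the caller, and the thresholds are placeholders to tune against your own data.

```python
def run_sanity_checks(events, baseline_volume, field="experiment_id",
                      volume_tolerance=0.3, max_null_rate=0.01,
                      max_cardinality=50):
    """Return alert strings for one night's events. Empty list means healthy."""
    alerts = []
    volume = len(events)

    # Event volume: flag large swings against a trailing baseline.
    if baseline_volume > 0:
        drift = abs(volume - baseline_volume) / baseline_volume
        if drift > volume_tolerance:
            alerts.append(f"volume: {volume} events vs baseline {baseline_volume}")

    # Null rate: share of events missing the field entirely or set to None.
    values = [event.get(field) for event in events]
    null_rate = sum(v is None for v in values) / volume if volume else 1.0
    if null_rate > max_null_rate:
        alerts.append(f"null_rate: {null_rate:.1%} of events lack {field}")

    # Cardinality spike: too many distinct values often means bad tagging.
    distinct = len({v for v in values if v is not None})
    if distinct > max_cardinality:
        alerts.append(f"cardinality: {distinct} distinct values for {field}")

    return alerts
```

Returning strings keeps the function easy to wire into whatever alerting channel the team already uses.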

Decision and documentation flow

- Generate draft decision memos from the analytics notebook

- Push key metrics and confidence to Slack before the review

- Auto-update the experiment registry and link to PRs
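
Drafting the memo can be as simple as rendering analysis outputs into a fixed text template. The sketch below is one possible shape; the field names and layout are assumptions, and a human still owns the final call.

```python
def draft_decision_memo(experiment_id, primary_metric, effect, ci_low, ci_high,
                        guardrail_breaches, next_action):
    """Render a plain-text draft memo from analysis results."""
    breaches = ", ".join(guardrail_breaches) if guardrail_breaches else "none"
    lines = [
        f"Decision memo (draft): {experiment_id}",
        f"Primary metric: {primary_metric}",
        # Effects are passed as fractions and shown as signed percentages.
        f"Effect size: {effect:+.2%} (interval {ci_low:+.2%} to {ci_high:+.2%})",
        f"Guardrail breaches: {breaches}",
        f"Proposed next action: {next_action}",
    ]
    return "\n".join(lines)


# Hypothetical values for illustration only.
memo = draft_decision_memo(
    "exp_onboarding_fields", "activation within 24 hours",
    0.02, -0.007, 0.047, [], "extend run to reach target sample",
)
```

The same string can be posted to Slack before the review and stored alongside the registry entry.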

Metrics, Guardrails, and Stop Rules

Define metrics up front. Stop on time, power, or risk. Avoid p-hacking by freezing analysis plans.

Primary and secondary metrics

- One primary metric tied to user or revenue value

- 2 to 3 secondary metrics for mechanism checks

- Guardrails for latency, error rate, and churn

Stop rules you can audit

- Time-based: run a full 14 days so every weekday is covered twice

- Power-based: stop once each variant reaches the sample size required for the target MDE and power

- Risk-based: stop if guardrails breach tolerances
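
To make these rules auditable, it helps to compute the sample target before launch and record which rule fired. The sketch below uses the standard two-proportion sample size approximation and a small rule checker. The function names, the absolute MDE convention, and the defaults (5 percent significance, 80 percent power, 14-day window) are assumptions for illustration.

```python
import math
from statistics import NormalDist


def required_sample_per_arm(baseline_rate, mde_abs, alpha=0.05, power=0.8):
    """Approximate users needed per variant to detect an absolute lift of mde_abs."""
    p1 = baseline_rate
    p2 = baseline_rate + mde_abs
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_power = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance / mde_abs ** 2)


def fired_stop_rules(days_run, n_per_arm, required_n, guardrail_breached,
                     window_days=14):
    """Return the names of every stop rule that currently applies."""
    fired = []
    if days_run >= window_days:
        fired.append("time")
    if n_per_arm >= required_n:
        fired.append("power")
    if guardrail_breached:
        fired.append("risk")
    return fired


# Hypothetical plan: 10 percent baseline, 2 point absolute MDE.
target = required_sample_per_arm(0.10, 0.02)
```

With these example inputs the target comes out a bit over 3,800 users per variant. Freezing that number in the analysis plan is what keeps the power rule from turning into peeking.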

Experiment Loops in Programmatic SEO

Programmatic SEO thrives on fast iteration with strict editorial standards. Use experiment loops to scale without noise.

SEO architecture decisions

- Template coverage vs. depth of fields

- Internal link graph density by template type

- Server-side rendering (SSR) vs. incremental static regeneration (ISR) for crawl budgets

Test surfaces and examples

- Schema changes: FAQ, HowTo, and Product types

- Title and H1 patterns that preserve intent

- Navigation blocks that shift PageRank flow

Building Distribution Loops Around Experiments

Experiments do not end at the result. Ship distribution patterns that reuse the win.

From win to multiplier

- Convert the decision memo into a changelog note

- Syndicate to developer docs and release feeds

- Update sales enablement and onboarding flows

Feedback into backlog

- Add follow-up hypotheses that chain mechanisms

- Create refactors for component reuse

- Flag wins that justify broader architectural changes

Execution Playbooks and Roles

Codify who does what and when. Treat roles like interfaces.

Owners and interfaces

- Product operator: sets goals, owns backlog rank

- Growth engineer: implements flags, telemetry, and code

- Analyst: designs metrics, runs analysis plan

- QA lead: validates events and edge cases

- Editor: guards content standards for SEO experiments

Weekly cadence

- Monday: intake and scoping

- Midweek: build, QA, and soft launch

- Friday: review, decision, and registry update

Tooling and a Minimal Data Stack

Keep tools lean. Bias to what the team already uses.

Stack baseline

- Source: app events with typed schemas

- Warehouse: columnar store with cost controls

- Transform: SQL or dbt for derived tables

- Analysis: notebooks with version control

- BI: dashboards for decision meetings

Automation triggers

- CI runs event schema tests on PR

- Orchestrator backfills experiment features nightly

- Slack bot posts guardrail breaches instantly
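
A schema test in CI can be a plain function that checks required fields and types, called from whatever test runner the repo already uses. The schema below is a hypothetical example; the field names are assumptions, not a required event shape.

```python
# Hypothetical typed schema for an experiment exposure event.
EVENT_SCHEMA = {
    "event_name": str,
    "user_id": str,
    "experiment_id": str,
    "variant": str,
    "ts": float,  # Unix timestamp in seconds
}


def validate_event(event, schema=EVENT_SCHEMA):
    """Return a list of schema violations. Empty list means the event is valid."""
    errors = []
    for field, expected_type in schema.items():
        if field not in event:
            errors.append(f"missing field: {field}")
        elif not isinstance(event[field], expected_type):
            actual = type(event[field]).__name__
            errors.append(f"wrong type for {field}: got {actual}")
    return errors


sample = {
    "event_name": "signup_completed",
    "user_id": "user_42",
    "experiment_id": "exp_onboarding_fields",
    "variant": "treatment",
    "ts": 1700000000.0,
}
assert validate_event(sample) == []
```

Running this against fixture events on every PR catches a dropped `experiment_id` before it reaches the warehouse.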

A Simple Blueprint You Can Ship Next Sprint

Start with one loop. Run it for four cycles. Measure cycle time and win rate.
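
Cycle time and win rate fall out of a few fields per experiment. A minimal sketch, assuming each record is a dict with `start` and `stop` dates and a `decision` string where `"ship"` counts as a win. Those names and values are illustrative assumptions.

```python
from datetime import date
from statistics import median


def loop_health(records):
    """Summarize median cycle time in days and win rate across experiments."""
    # Only finished experiments have a cycle time.
    cycle_days = [(r["stop"] - r["start"]).days for r in records if r.get("stop")]
    # Only decided experiments count toward win rate.
    decided = [r for r in records if r.get("decision")]
    wins = sum(1 for r in decided if r["decision"] == "ship")
    return {
        "median_cycle_days": median(cycle_days) if cycle_days else None,
        "win_rate": wins / len(decided) if decided else None,
    }


# Made-up records for illustration only.
records = [
    {"start": date(2024, 1, 1), "stop": date(2024, 1, 15), "decision": "ship"},
    {"start": date(2024, 1, 8), "stop": date(2024, 1, 18), "decision": "no_ship"},
    {"start": date(2024, 2, 1), "stop": None, "decision": None},
]
summary = loop_health(records)
```

Tracking the same two numbers after each of the four cycles shows whether the loop is tightening.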

Setup steps

1) Create an experiment registry table with fields for ID, owner, hypothesis, metrics, MDE, start, stop, decision, and links.

2) Add experiment_id to relevant events and backfill where safe.

3) Ship a feature flag service or use an existing provider.

4) Write a decision memo template with effect size, risk, and next action.
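
Step 1 can start as a single table. The sketch below uses SQLite from the Python standard library only to stay self-contained; the column names `start_date` and `stop_date`, the JSON-in-text columns, and the helper name are assumptions, and the same shape should carry over to a warehouse table.

```python
import json
import sqlite3

REGISTRY_DDL = """
CREATE TABLE IF NOT EXISTS experiment_registry (
    id          TEXT PRIMARY KEY,
    owner       TEXT NOT NULL,
    hypothesis  TEXT NOT NULL,
    metrics     TEXT NOT NULL,  -- JSON list of metric names, primary first
    mde         REAL,           -- minimum detectable effect
    start_date  TEXT,           -- ISO 8601 date
    stop_date   TEXT,
    decision    TEXT,           -- stays NULL until the review meeting
    links       TEXT            -- JSON list of PR and memo links
)
"""


def register_experiment(conn, row):
    """Insert a new experiment. Decision, stop date, and links are filled later."""
    conn.execute(
        "INSERT INTO experiment_registry "
        "(id, owner, hypothesis, metrics, mde, start_date) "
        "VALUES (:id, :owner, :hypothesis, :metrics, :mde, :start_date)",
        row,
    )
    conn.commit()


conn = sqlite3.connect(":memory:")
conn.execute(REGISTRY_DDL)
register_experiment(conn, {
    "id": "exp_onboarding_fields",  # hypothetical experiment
    "owner": "growth-eng",
    "hypothesis": "Fewer required fields raise 24 hour activation",
    "metrics": json.dumps(["activation_24h", "support_tickets", "error_rate"]),
    "mde": 0.02,
    "start_date": "2024-01-01",
})
```

The primary key on `id` means a duplicate registration fails loudly instead of silently creating a second record.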

First two experiments

1) Onboarding friction: reduce required fields in the first run. Primary metric: activation within 24 hours. Guardrails: support tickets and error rate.

2) Programmatic SEO template: add entity-level internal links. Primary metric: non-brand organic clicks per page. Guardrails: time to first byte and crawl errors.

Failure Modes and Rollbacks

Plan for breakage. Fast loops need safe exits.

Common failure modes

- No baseline or MDE defined

- Mixed changes inside a single test

- Sample leakage across variants

- Missing event joins due to ID drift
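
Sample leakage is cheap to detect from exposure logs. A minimal sketch, assuming exposures arrive as `(user_id, variant)` pairs; the function name and input shape are illustrative.

```python
from collections import defaultdict


def find_leaked_users(exposures):
    """Return sorted user IDs that were exposed to more than one variant."""
    variants_seen = defaultdict(set)
    for user_id, variant in exposures:
        variants_seen[user_id].add(variant)
    return sorted(u for u, seen in variants_seen.items() if len(seen) > 1)


# Made-up exposure log for illustration only.
exposures = [
    ("user_1", "control"),
    ("user_2", "treatment"),
    ("user_1", "treatment"),  # user_1 saw both variants
    ("user_2", "treatment"),
]
leaked = find_leaked_users(exposures)
```

A non-empty result is worth an alert: those users should be excluded or the assignment logic fixed before the analysis runs.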

Rollbacks and fixes

- Kill switch on all flags

- Revert scripts for content or templates

- Data remediation job to rekey events

- Postmortem and pattern update in the playbook

Comparison: Loop Types and When to Use Them

Use this table to select the right loop design.

| Loop Type | Best For | Sample Sizing | Risk Profile | Cycle Time |
|---|---|---|---|---|
| A/B with flags | UI flows and paywalls | Power-based | Low | 1 to 3 weeks |
| Quasi-experimental | Pricing and packaging | Synthetic controls | Medium | 2 to 6 weeks |
| Sequential tests | SEO templates and copy | SPRT or Bayesian | Low | Continuous |
| Feature toggles with logs | Backend performance | SLO deltas | Low | Days |

Case Pattern: Developer Tool With SEO Architecture Needs

A developer platform wants organic signups and lower activation time.

Loop design

- Primary loop: activation within 24 hours from signup

- Secondary loop: organic clicks per page for programmatic SEO

- Shared guardrails: error rate and latency

Outcomes after one quarter

- Activation rate up 14 percent

- Organic clicks per page up 22 percent

- Cycle time down from 21 days to 10 days

Governance and Ethics in Experimentation

Ship faster without harming users or search ecosystems.

Privacy and consent

- Respect user consent and regional rules

- Do not log sensitive fields into the warehouse

- Anonymize identifiers where possible
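
One common way to keep raw identifiers out of the warehouse is keyed hashing before load. The sketch below uses HMAC-SHA256 from the standard library. Note the assumption: this is pseudonymization, not full anonymization, since anyone holding the key can re-derive the mapping. The key value and function name are placeholders.

```python
import hashlib
import hmac


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Replace a raw identifier with a stable keyed hash."""
    # The same ID and key always give the same token, so joins still work.
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Placeholder key for illustration. Load the real one from a secrets manager.
key = b"replace-with-a-managed-secret"
token = pseudonymize("user_42", key)
```

Rotating the key breaks joins with older data, so decide on a rotation policy before the first experiment ships.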

SEO integrity

- Avoid thin or manipulative templates

- Validate content quality and usefulness

How Experiment Loops Connect to Execution Playbooks

Loops are the operating system. Playbooks are the installed apps.

Playbook interface

- Inputs: ranked hypotheses and constraints

- Process: steps and acceptance checks

- Outputs: code, content, and decisions

Maintenance cadence

- Quarterly review to retire stale steps

- Add new failure modes from postmortems

How Experiment Loops Amplify Distribution Loops

A win grows faster when distribution is automatic.

Distribution mechanics

- Auto-create snippets for social and email

- Embed charts that update live

- Map wins to segments and notify owners

Measurement

- Track reach, engagement, and assisted conversions

- Attribute secondary effects to loop wins

Key Takeaways

- Build experiment loops with codified hypotheses, metrics, and stop rules.

- Automate data capture, QA, and decision logging to reduce cycle time.

- Use experiment loops to scale programmatic SEO with quality.

- Tie wins to distribution loops and execution playbooks for compounding impact.

- Measure success by win rate, cycle time, and reuse of proven patterns.

Close the loop every week. That rhythm compounds learning and growth.