Ship faster by turning every product change into an SEO asset you can learn from. Use experiment loops to capture intent, test messaging, and scale with automation.

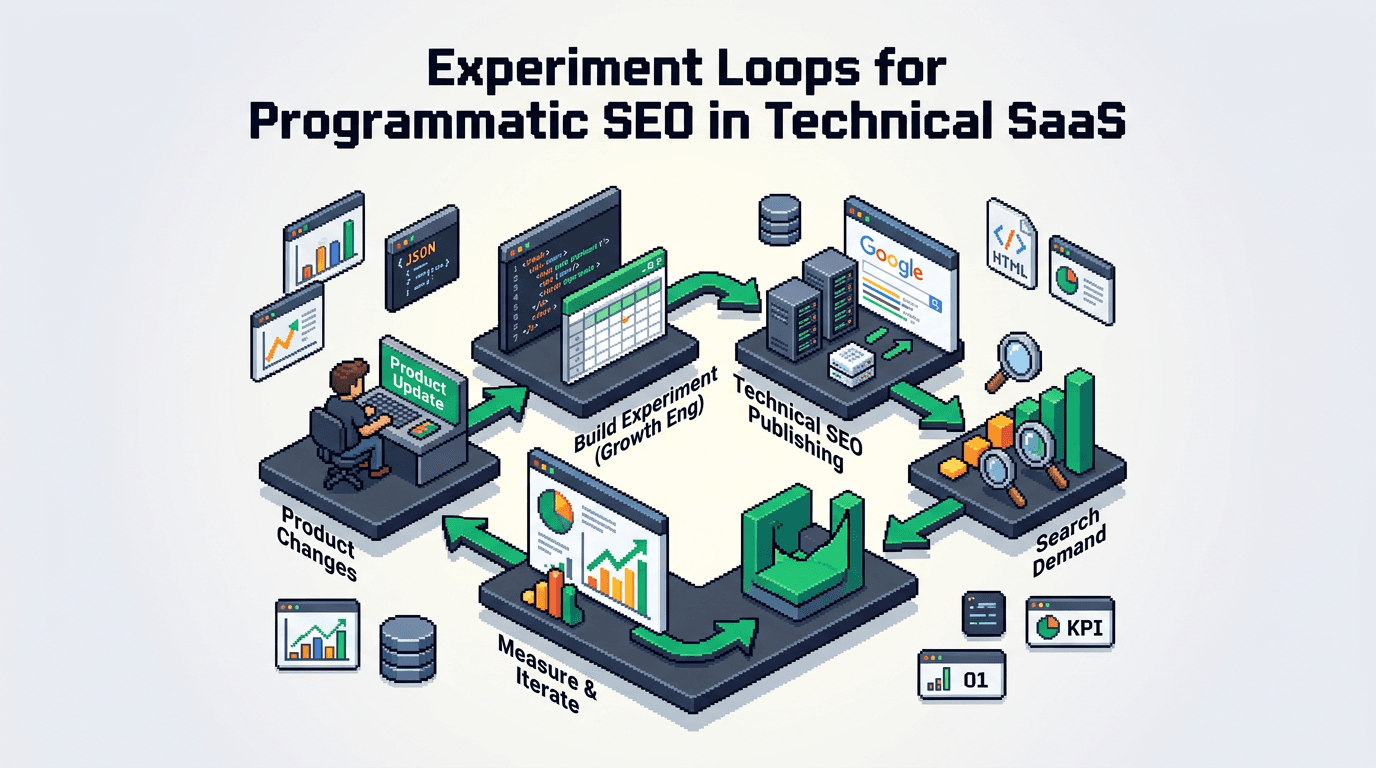

This post shows technical SaaS teams how to build experiment loops that convert product features into search demand. It covers inputs, workflows, and metrics across SEO architecture, programmatic SEO, automation workflows, and distribution loops. Key takeaway: a tight loop from hypothesis to indexed artifact compounds growth while preserving editorial quality.

What Are Experiment Loops and Why They Matter

Experiment loops are short, repeatable cycles that move from hypothesis to shipped artifact to measured outcome. In SEO, the artifact is indexable content mapped to a query and a user job.

Core definition

- Input: a product change, user problem, or feature insight.

- Process: generate hypotheses, create content variants, ship, measure.

- Output: uplift in impressions, clicks, conversions, or learning that informs the next iteration.

Fit for technical SaaS

- Technical products evolve often. Each release is a candidate search surface.

- Complex queries need precise architecture, not generic blogs.

- Programmatic SEO enables volume without losing specificity.

System Architecture for Programmatic SEO

Programmatic SEO needs a schema, a rendering strategy, and a source of truth. The loop fails if pages drift from product reality.

Canonical entities and templates

- Define entities: features, integrations, use cases, industries, error codes, metrics.

- Map templates to entity types: guide, spec, integration page, how-to, troubleshooting.

- Maintain a content schema with required fields per template.
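
A minimal sketch of a per-template field check, assuming the schema is a plain mapping from template name to required fields. The template names and field names here are hypothetical placeholders, not a prescribed schema.

```python
# Hypothetical template contracts: template and field names are illustrative only.
REQUIRED_FIELDS = {
    "integration_page": ["tool", "summary", "setup_steps", "api_version"],
    "troubleshooting": ["error_code", "symptom", "fix_steps"],
}


def missing_fields(template: str, entry: dict) -> list[str]:
    """Return required fields that are absent or empty for the given template."""
    return [name for name in REQUIRED_FIELDS[template] if not entry.get(name)]


entry = {"tool": "Okta", "summary": "Set up SSO with Okta.", "setup_steps": []}
print(missing_fields("integration_page", entry))  # ['setup_steps', 'api_version']
```

An empty list counts as missing here, so a page with a `setup_steps` field but no steps still fails the check.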

Data pipeline and SSR rendering

- Source data from product docs, changelogs, telemetry, and support tickets.

- Normalize to a headless CMS or config repo.

- Render with server-side rendering (SSR) in React or a similar framework so crawlers receive complete HTML and consistent metadata.

Routing and indexation controls

- Deterministic slugs: /integrations/{tool}/, /features/{capability}/, /use-cases/{job}/.

- Robots rules by template state: draft, test, canonical.

- Use `rel="canonical"` and sitemap segmentation by entity to guide discovery.
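
The routing rules above could be sketched roughly as follows. The robots policy per state and the sitemap file names are assumptions for illustration; the right policy for test pages depends on how your experiment flags work.

```python
import re

# Route prefixes follow the slug patterns listed above.
ROUTE_PREFIX = {"integration": "integrations", "feature": "features", "use_case": "use-cases"}

# Assumed policy: drafts stay out of the index; test and canonical pages are crawlable.
ROBOTS_BY_STATE = {
    "draft": "noindex, nofollow",
    "test": "index, follow",
    "canonical": "index, follow",
}


def slugify(name: str) -> str:
    """Lowercase the name and collapse runs of non-alphanumerics into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", name.lower()).strip("-")


def page_path(entity_type: str, name: str) -> str:
    """Build the same path for the same entity on every build."""
    return f"/{ROUTE_PREFIX[entity_type]}/{slugify(name)}/"


def sitemap_segments(pages: list[dict]) -> dict[str, list[str]]:
    """Group non-draft page paths into one sitemap file per entity type."""
    segments: dict[str, list[str]] = {}
    for page in pages:
        if page["state"] == "draft":
            continue
        filename = f"sitemap-{ROUTE_PREFIX[page['type']]}.xml"
        segments.setdefault(filename, []).append(page_path(page["type"], page["name"]))
    return segments


pages = [
    {"type": "integration", "name": "Okta", "state": "canonical"},
    {"type": "integration", "name": "Auth0", "state": "draft"},
    {"type": "use_case", "name": "SSO Setup", "state": "test"},
]
print(sitemap_segments(pages))
```

Because slugs are derived only from the entity type and name, rebuilding the site never changes a URL unless the entity itself is renamed.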

The Experiment Loop Workflow

A practical loop for SEO content around product changes.

1. Hypothesis framing

- Statement: If we target {query cluster} for {feature or job}, we increase qualified trials by X within 30 days.

- Scope: define audience, search intent, and stage.

- Acceptance: CTR up 0.5 percentage points for queries ranking in the top 20, or 10 demo requests, or a bounce rate below 55 percent.
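
One way to make the acceptance criteria machine-checkable is a small predicate like the one below. The `Outcome` type and its field names are hypothetical; the thresholds are the ones stated in the hypothesis above.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    ctr_delta_pts: float  # CTR change in percentage points vs. baseline
    demo_requests: int
    bounce_rate: float    # fraction between 0.0 and 1.0


def accepted(outcome: Outcome) -> bool:
    """True if any one of the three acceptance thresholds is met."""
    return (
        outcome.ctr_delta_pts >= 0.5
        or outcome.demo_requests >= 10
        or outcome.bounce_rate < 0.55
    )


print(accepted(Outcome(ctr_delta_pts=0.2, demo_requests=4, bounce_rate=0.61)))  # False
print(accepted(Outcome(ctr_delta_pts=0.7, demo_requests=4, bounce_rate=0.61)))  # True
```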

2. Query modeling

- Cluster by job to be done, not keyword variations.

- Build primary query, supporting intents, and synonyms from logs and SERP mining.

- Define searcher task completion criteria.

3. Template selection

- Choose from predefined templates bound to entity types.

- Ensure fields cover unique value: benchmarks, APIs, SDKs, code examples.

- Set success metrics per template.

4. Draft generation with automation workflows

- Pull product facts from docs and telemetry.

- Generate first pass with AI using strict schema prompts.

- Insert deterministic elements: API params, version, limits, sample payloads.
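
A rough sketch of the drafting step, assuming the model returns a JSON-like dict. No LLM client is called here; `build_prompt` only composes the prompt text, and `merge_deterministic` overwrites model output with values from the source of truth. Both function names and the example facts are hypothetical.

```python
import json


def build_prompt(template: str, fields: list[str], facts: dict) -> str:
    """Compose a prompt that asks for JSON with exactly the schema's fields."""
    return (
        f"Write a '{template}' page as a JSON object with exactly these keys: "
        f"{', '.join(fields)}.\n"
        "Use only the product facts below. Do not invent parameters or limits.\n"
        f"FACTS: {json.dumps(facts, sort_keys=True)}"
    )


def merge_deterministic(draft: dict, facts: dict, locked: list[str]) -> dict:
    """Overwrite locked fields with values from the source of truth."""
    merged = dict(draft)
    for key in locked:
        merged[key] = facts[key]
    return merged


facts = {"api_version": "v2", "rate_limit": "100 requests/min"}
draft = {"summary": "Connect Okta in minutes.", "api_version": "v9"}  # model guessed wrong
page = merge_deterministic(draft, facts, locked=["api_version", "rate_limit"])
print(page["api_version"], "|", page["rate_limit"])  # v2 | 100 requests/min
```

Locking fields this way means a wrong version or limit in the generated draft never reaches the published page, whatever the model wrote.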

5. Editorial and factual checks

- Validate commands, code, and API responses in a sandbox.

- Run linters for style and schema completeness.

- Human review signs off on accuracy and positioning.

6. Ship and index

- SSR build, push to staging, run acceptance tests.

- Release behind an experiment flag with partial sitemap inclusion.

- Monitor crawl stats and indexing status.

7. Measure and learn

- Collect leading indicators at 24 and 72 hours: impressions, crawl frequency, and render diagnostics.

- Evaluate at 14 and 28 days on clicks, CTR, ranking breadth, and assisted conversions.

- Decide: scale, iterate, or sunset.
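
The final decision can be reduced to a small rule, sketched here under assumptions: `min_clicks` is an arbitrary placeholder threshold, and `met_acceptance` is whatever your acceptance check returned at the 28-day evaluation.

```python
def decide(met_acceptance: bool, clicks_28d: int, min_clicks: int = 25) -> str:
    """Map 28-day results to one of the three loop outcomes."""
    if met_acceptance:
        return "scale"    # hypothesis met its acceptance criteria
    if clicks_28d >= min_clicks:
        return "iterate"  # some demand exists; revise and re-test
    return "sunset"       # no signal worth another iteration


print(decide(met_acceptance=False, clicks_28d=40))  # iterate
```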

SEO Architecture That Supports Fast Iteration

Architecture choices determine loop speed and reliability.

Content schema as contract

- Every template lists required, optional, and computed fields.

- Computed fields include title formulas, meta descriptions, and internal link blocks.

- Version the schema. Breaking changes trigger migrations.
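
Computed fields and schema versioning might look like the sketch below. The title pattern, the 155-character limit, and the version string are assumptions chosen for illustration.

```python
SCHEMA_VERSION = "2.0.0"  # hypothetical current version


def title(entry: dict) -> str:
    """Title formula for an integration page (assumed pattern)."""
    return f"{entry['feature']} with {entry['tool']}: Setup Guide"


def meta_description(entry: dict, limit: int = 155) -> str:
    """Derive the description from the summary, truncated at a word boundary."""
    text = entry["summary"].strip()
    if len(text) <= limit:
        return text
    return text[: limit - 3].rsplit(" ", 1)[0] + "..."


def needs_migration(stored_version: str, current: str = SCHEMA_VERSION) -> bool:
    """Treat a major-version difference as a breaking change."""
    return stored_version.split(".")[0] != current.split(".")[0]


entry = {"feature": "SSO", "tool": "Okta", "summary": "Configure SAML SSO with Okta."}
print(title(entry))              # SSO with Okta: Setup Guide
print(needs_migration("1.4.0"))  # True
```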

Internal linking graph

- Deterministic rules: parent to child, sibling to sibling by facet.

- Generate link blocks at build time with caps per page.

- Maintain hub pages that summarize clusters and funnel depth.
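
As an illustration of deterministic link rules, the sketch below builds a capped link block from parent-to-child and sibling-by-facet relations. The `Page` shape and the default cap of 5 are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Page:
    path: str
    parent: Optional[str]  # path of the hub page, if any
    facet: str             # e.g. "sso"


def link_block(page: Page, all_pages: list[Page], cap: int = 5) -> list[str]:
    """Children first, then siblings sharing the facet; sorted, then capped."""
    children = sorted(p.path for p in all_pages if p.parent == page.path)
    siblings = sorted(
        p.path
        for p in all_pages
        if page.parent is not None
        and p.path != page.path
        and p.parent == page.parent
        and p.facet == page.facet
    )
    return (children + siblings)[:cap]


hub = Page("/features/sso/", None, "sso")
okta = Page("/integrations/okta/", "/features/sso/", "sso")
auth0 = Page("/integrations/auth0/", "/features/sso/", "sso")
pages = [hub, okta, auth0]
print(link_block(hub, pages))   # ['/integrations/auth0/', '/integrations/okta/']
print(link_block(okta, pages))  # ['/integrations/auth0/']
```

Sorting before capping keeps the output stable across builds, so link blocks only change when the page set changes.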

Quality gates

- Do not ship pages without product proof elements.

- Set a minimum word count only to guarantee coverage of the topic, never to encourage padding.

- Require at least two unique user tasks solved with verifiable steps.

Automation Lanes for Content Throughput

Automation lanes remove manual bottlenecks while preserving standards.

Lane 1: Intake and triage

- Intake sources: release notes, roadmap, support tags, API telemetry.

- Classify to entity type and priority using rules and LLM classifiers.

- Route to owners in growth engineering and product marketing.
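
The rule-based half of triage can be sketched with a few regular expressions. The patterns, entity labels, and owner names are illustrative assumptions; anything the rules miss falls through to manual review, which is where an LLM classifier could slot in.

```python
import re

# Ordered rules: the first matching pattern wins (patterns are illustrative).
RULES = [
    (re.compile(r"\b(integration|connector|webhook)\b", re.I), "integration"),
    (re.compile(r"\b(error|fails?|timeout|exception)\b", re.I), "error_code"),
    (re.compile(r"\b(adds?|new|launch(es|ed)?|supports?)\b", re.I), "feature"),
]

# Hypothetical routing table.
OWNERS = {
    "integration": "growth-engineering",
    "error_code": "growth-engineering",
    "feature": "product-marketing",
}


def triage(text: str) -> dict:
    """Classify an intake item by entity type and route it to an owner."""
    for pattern, entity_type in RULES:
        if pattern.search(text):
            return {"entity_type": entity_type, "owner": OWNERS[entity_type]}
    return {"entity_type": "unclassified", "owner": "manual-review"}


print(triage("New Okta integration for SSO"))
print(triage("Quarterly roadmap sync notes"))
```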

Lane 2: Drafting and enrichment

- Generate structured drafts from prompts with product facts.

- Enrich with code snippets, diagrams, and parameter tables.

- Attach acceptance tests for examples.

Lane 3: Review and compliance

- Run checklists for claims, security, and legal text.

- Verify third-party trademarks and integration accuracy.

- Approve for publish with signed commit.

Lane 4: Publish and monitor

- Automated deploy on merge.

- Notify analytics, Search Console, and logging systems.

- Open an iteration ticket with baseline metrics.

Distribution Loops That Compound Results

Publishing is the start. Distribution closes the loop and accelerates learning.

Owned channels

- Docs and in-app guides: embed short versions and link to the canonical page.

- Changelog entries cross-link to features and tutorials.

- Newsletter and release notes summarize new clusters.

Partner and community channels

- Integration partners co-publish guides with deep links.

- Community Q&A threads seeded with canonical steps.

- Developer forums reference code blocks and repos.

Social and syndication

- Post concise how-to threads with code and benchmarks.

- Repurpose as short videos that hit the same query intent.

- Syndicate to platforms that allow canonical tags.

Metrics, Dashboards, and Review Cadence

Measure outcomes and learning velocity. Prefer rate metrics over snapshots.

Leading indicators

- Crawl requests per page in first 7 days.

- Indexation time, soft 404 rates, and render errors.

- Internal link discovery depth.

Lagging indicators

- Impressions by cluster and template.

- CTR deltas vs control pages.

- Assisted signups, demos, or docs success events.
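
A CTR delta against control pages is a simple rate comparison. The sketch below assumes you already have click and impression totals for a test group and a control group; the numbers are made up for the example.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def ctr_delta_pts(test: tuple[int, int], control: tuple[int, int]) -> float:
    """Difference in CTR between test and control, in percentage points."""
    return round((ctr(*test) - ctr(*control)) * 100, 2)


# (clicks, impressions) for each group: illustrative numbers only.
print(ctr_delta_pts(test=(84, 2000), control=(66, 2000)))  # 0.9
```

Reporting the delta in percentage points rather than relative percent keeps it directly comparable with an acceptance threshold such as +0.5 pts.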

Cadence and governance

- Weekly standup: ship count, blockers, acceptance failures.

- Biweekly review: promote winners, kill laggards, refine templates.

- Quarterly refactor: schema evolution, routing, and deprecations.

Failure Modes and Safeguards

Expect failure. Contain blast radius and log learnings.

Common failure modes

- Thin pages from poor entity mapping.

- Duplicate intent across templates leading to cannibalization.

- Inaccurate code or outdated API details.

Safeguards

- Pre-publish similarity checks to avoid overlapping intents.

- Integration tests that call sample endpoints in CI.

- Auto-sunsetting rules: de-index pages that show no traction at 60 days and fall below quality thresholds.
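
Two of these safeguards lend themselves to small checks. The sketch below uses Jaccard similarity over query tokens as a crude proxy for overlapping intent, plus a sunsetting rule. The 0.6 similarity threshold, the zero-clicks definition of "no traction", and the quality score scale are all assumptions.

```python
def jaccard(a: str, b: str) -> float:
    """Token-set overlap between two queries, from 0.0 to 1.0."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    if not tokens_a or not tokens_b:
        return 0.0
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


def overlapping(candidate: str, existing: list[str], threshold: float = 0.6) -> list[str]:
    """Existing primary queries that look too close to the candidate."""
    return [query for query in existing if jaccard(candidate, query) >= threshold]


def should_deindex(age_days: int, clicks: int, quality: float, min_quality: float = 0.7) -> bool:
    """De-index only when a page is old enough, has no clicks, and scores low."""
    return age_days >= 60 and clicks == 0 and quality < min_quality


existing = ["okta sso setup", "auth0 rbac audit log"]
print(overlapping("okta sso setup guide", existing))  # ['okta sso setup']
print(should_deindex(age_days=75, clicks=0, quality=0.4))  # True
```

Token overlap misses synonyms and paraphrases, so treat a clean result as a first filter rather than proof that intents do not collide.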

Minimal Blueprint to Launch in 30 Days

A compact plan to stand up experiment loops for programmatic SEO.

Week 1: Foundations

- Define entities and pick 2 templates.

- Wire a headless CMS or config repo.

- Set SSR build with metadata contracts and sitemap segmentation.

Week 2: Inputs and prompts

- Pipe changelog and docs into the intake lane.

- Author LLM prompts aligned to schema fields.

- Build acceptance checks for facts and code.

Week 3: Ship first cluster

- Model a 20-page cluster around a feature and two integrations.

- Publish 5 pages behind test flags.

- Measure crawl and index speed.

Week 4: Scale and review

- Promote winners to full index.

- Iterate on titles, intros, and internal links.

- Open the next cluster based on learnings.

Tooling Comparison for Technical Teams

The table below compares common tooling for running experiment loops at scale, by fit and level of control.

| Category | Option | Strengths | Risks | Best for |
|---|---|---|---|---|
| CMS | Headless CMS | Versioned content, workflows | Vendor limits, cost | Teams with editors and approval flows |
| Repo | Markdown in repo | Full control, diffable PRs | Non-marketers blocked on Git | Eng-led orgs with strong CI |
| Rendering | SSR React | Crawlable, dynamic data | Build complexity | Apps with shared design system |
| Analytics | Product analytics | Conversion attribution | SEO session stitching | PLG funnels |
| SEO | Search Console | Query-level feedback | Limited attribution | Early loop validation |

Execution Playbooks and Ownership

Clarify owners to prevent drift and delays.

Roles and responsibilities

- Growth engineering: schema, pipelines, SSR, tests.

- Product marketing: positioning, messaging, examples.

- Developer relations: code samples, repos, community loops.

Acceptance checks

- Schema complete and validated.

- Two unique user tasks demonstrated with steps.

- No overlapping intent with existing pages.

- Indexing readiness confirmed by pre-render tests.

Case Pattern You Can Replicate

A reusable pattern that maps product surfaces to search surfaces.

Inputs

- Feature: role-based access control (RBAC).

- Integrations: Okta, Auth0.

- Jobs: secure access, audit compliance, SSO setup.

Process

- Build templates for integration setup, compliance guides, and troubleshooting.

- Generate drafts with code blocks, CLI commands, and API payloads.

- Publish in stages, link between feature, integration, and troubleshooting pages.

Outputs

- Queries captured for SSO setup with vendor names.

- Higher CTR from exact-match task language.

- Assisted conversions for enterprise leads.

Key Takeaways

- Use experiment loops to turn product changes into search-ready artifacts fast.

- Anchor programmatic SEO in a strict schema, SSR rendering, and internal link rules.

- Run automation lanes to cut manual work without lowering standards.

- Close the loop with distribution and rate-based metrics to learn faster.

- Check progress weekly, and in the biweekly review kill low performers to protect quality and budget.

A tight, measured loop beats ad hoc publishing. Build the system once and let it compound.