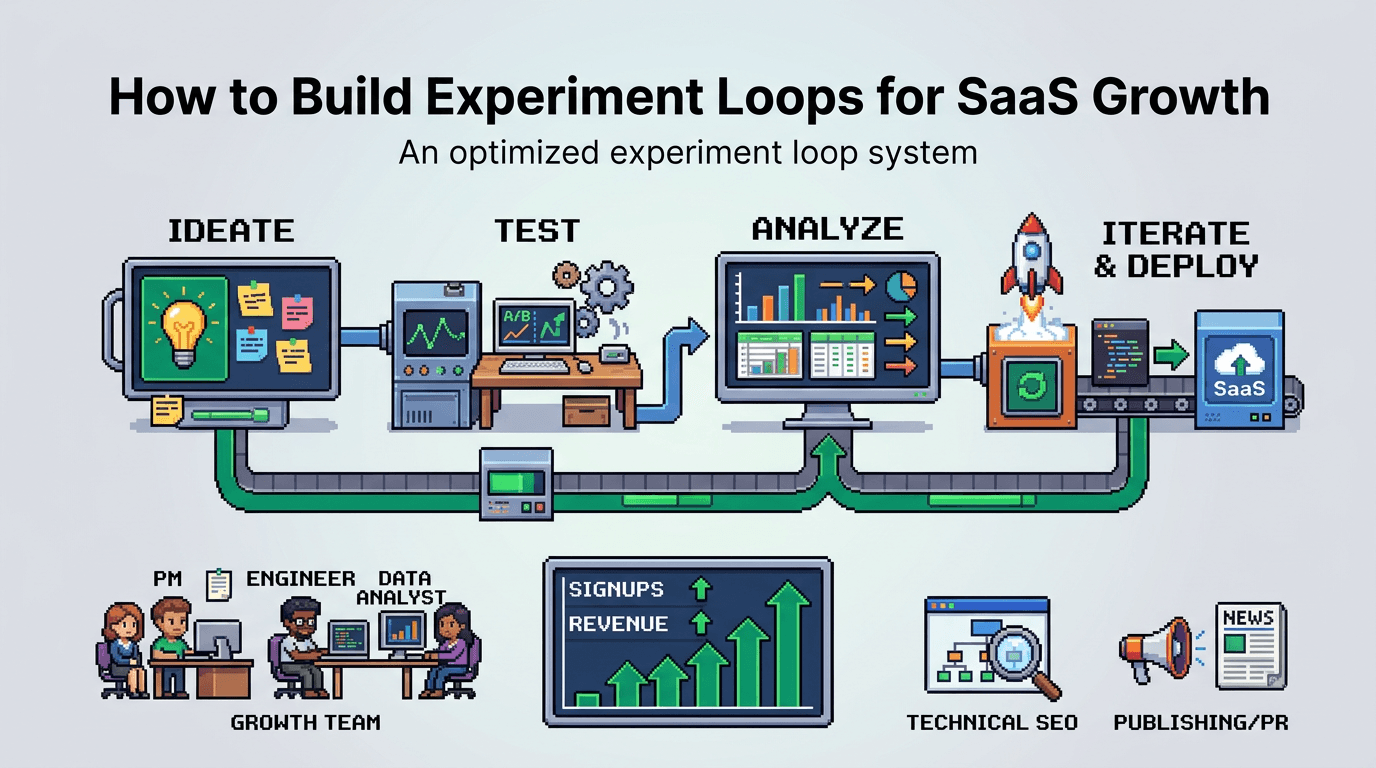

High-growth teams do not guess. They run tight experiment loops that turn unknowns into compounding wins.

This guide shows product operators and technical growth practitioners how to design, run, and scale experiment loops. You will learn a practical system with automation workflows, programmatic SEO examples, and distribution loops. The key takeaway: build a repeatable loop with clear inputs, fast analysis, and shipping discipline.

What Are Experiment Loops?

Experiment loops are closed systems that turn hypotheses into production changes on a fixed cadence. They reduce opinion work and expose constraints early.

Core components

- Inputs: problems, ideas, and data sources that seed hypotheses.

- Process: prioritization, design, implementation, and measurement steps.

- Outputs: accepted changes, rollbacks, and learnings added to playbooks.

- Cadence: a weekly or biweekly rhythm with clear gates.

Why loops beat one-off tests

- Speed: a fixed pipeline avoids reinventing the process each time.

- Signal quality: standard metrics and data checks reduce noise.

- Compounding: learnings roll into templates and playbooks.

- Focus: WIP limits prevent scatter and context switching.

Choose the Primary Metric and Guardrails

A loop needs one north star for a given scope and guardrails that protect the business while you explore.

Select a north star

- Product-led growth: activation rate or PQL-to-SQL rate.

- SEO system: non-brand organic signups or qualified search traffic.

- Monetization: trial-to-paid conversion or expansion revenue.

Acceptance check: the metric defines success for a single release window, and everyone can compute it from shared data.

Add guardrail metrics

- Reliability: error rate, page speed, uptime.

- Quality: bounce rate on programmatic pages, support tickets per 1k sessions.

- Finance: CAC payback trend, promo usage abuse.

Failure mode: teams skip guardrails and ship wins that hurt retention. Roll back if any guardrail breaches its threshold for two cycles.
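The two-cycle rollback rule can be sketched as a small check. This is a minimal illustration, not a prescribed implementation: it assumes "two cycles" means the two most recent consecutive readings, that a higher value is worse, and the function name and sample readings are hypothetical.

```python
from typing import Sequence


def should_rollback(values: Sequence[float], threshold: float, consecutive: int = 2) -> bool:
    """Return True if the last `consecutive` cycle readings all exceed the threshold.

    Assumes higher is worse (e.g. error rate). Invert the comparison for
    guardrails where lower is worse (e.g. uptime).
    """
    if len(values) < consecutive:
        return False  # not enough history to apply the rule
    return all(v > threshold for v in values[-consecutive:])


# Hypothetical error-rate readings, one per cycle.
error_rate_by_cycle = [0.010, 0.012, 0.021, 0.024]
print(should_rollback(error_rate_by_cycle, threshold=0.02))
```

A single bad cycle does not trigger the rule, which keeps one noisy reading from forcing a rollback.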

Design the Experiment Loop Pipeline

Turn the loop into a visible pipeline with owners, gates, and SLAs.

Stages and owners

- Intake: PM or Growth Lead triages. SLA 24 hours.

- Prioritize: cross-functional review selects 1 to 3 items. SLA 24 hours.

- Design: analyst and engineer write brief and success checks. SLA 48 hours.

- Build: engineer ships behind a flag. SLA 3 to 5 days.

- Measure: analyst sets up dashboards and checks data quality. SLA 24 hours post-launch.

- Decide: accept, iterate, or roll back. SLA 24 hours.

Gates and artifacts

- Hypothesis brief: problem, solution, metric, segment, estimate, risk.

- Experiment spec: events, variants, rollout plan, sample stop rule.

- Dashboard link: core metric, guardrails, segment cuts.

- Postmortem: result, cause analysis, next action, playbook diff.

Automation Workflows That Cut Cycle Time

Automate the busywork so the loop moves fast without dropping quality.

Template and checklist automation

- PR templates: include hypothesis ID, metrics, and flags.

- Jira or Linear templates: auto-generate tasks from a form.

- QA checklists: Lighthouse, schema, tracking, and accessibility.

Minimal blueprint:

1) Store templates in a repo. 2) Use issue forms to spawn tasks. 3) Add Git hooks to enforce fields. 4) Block merges if checks fail.
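Steps 3 and 4 of the blueprint could be backed by a check like the one below, run from a Git hook or CI job. It is only a sketch: the field labels come from the PR template bullet above, but the exact `Label: value` format and the function name are assumptions.

```python
import re

# Labels taken from the PR template bullet; the "Label: value" layout is assumed.
REQUIRED_FIELDS = ("Hypothesis ID", "Metrics", "Flags")


def missing_fields(description: str) -> list[str]:
    """Return required field labels that are absent or left blank."""
    missing = []
    for field in REQUIRED_FIELDS:
        # The label must start a line and be followed by a non-blank value
        # on the same line.
        pattern = rf"^{re.escape(field)}:[ \t]*\S"
        if not re.search(pattern, description, flags=re.IGNORECASE | re.MULTILINE):
            missing.append(field)
    return missing


# Hypothetical PR description with an empty Flags field.
pr_body = "Hypothesis ID: EXP-12\nMetrics: activation rate\nFlags:\n"
print(missing_fields(pr_body))
```

A hook or CI step would exit non-zero when the returned list is not empty, which is what blocks the merge.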

Data and reporting automation

- Event validation: schema checks in CI for analytics payloads.

- Daily jobs: refresh experiment tables and anomaly alerts.

- Auto snapshots: capture pre- and post-launch metrics and attach them to tickets.
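The event validation item can be illustrated with a small schema check that a CI job might run against sample payloads. The field names and types in `EVENT_SCHEMA` are hypothetical; a real setup would load the team's own schema.

```python
# Hypothetical schema for an experiment exposure event.
EVENT_SCHEMA = {
    "event": str,
    "user_id": str,
    "exp_id": str,
    "variant": str,
    "ts": int,
}


def validate_event(payload: dict) -> list[str]:
    """Return a list of human-readable schema violations (empty if valid)."""
    errors = []
    for key, expected in EVENT_SCHEMA.items():
        if key not in payload:
            errors.append(f"missing field: {key}")
        elif not isinstance(payload[key], expected):
            errors.append(f"wrong type for {key}: expected {expected.__name__}")
    # Flag fields the schema does not know about.
    for key in sorted(payload.keys() - EVENT_SCHEMA.keys()):
        errors.append(f"unexpected field: {key}")
    return errors


sample = {"event": "exposure", "user_id": "u_1", "exp_id": "EXP-12", "ts": 1700000000}
print(validate_event(sample))
```

Failing the build on any non-empty result keeps malformed events out of the experiment tables.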

Example SQL skeleton:

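```sql
-- Daily metric snapshot
-- Assumes exp_fact holds one row per user per day and that
-- converted is stored as 0/1, so avg(converted) is the conversion rate.
insert into metrics_daily (exp_id, date, users, conv_rate)
select exp_id, current_date, count(distinct user_id), avg(converted)
from exp_fact
where exp_id = :id and event_date >= current_date - interval '1 day'
group by exp_id;
```

Programmatic SEO Use Case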

Apply the loop to programmatic SEO to scale qualified traffic without quality loss.

Inputs and data model

- Source data: product catalog, integrations, templates.

- Entities: feature, industry, use case, location.

- Mapping: each template resolves one entity or a combination.

Assumption: SSR templates exist and ship with clean internal linking. See guidance from Google on SEO basics at https://developers.google.com/search/docs/fundamentals/seo-starter-guide.

Hypotheses and tests

- Title pattern variants: {Entity} software vs {Alt}. Goal: CTR lift.

- Module order: FAQs above the fold vs below. Goal: bounce rate drop.

- Schema markup: FAQPage and Product for eligible templates. Goal: SERP enhancements.

Acceptance checks: no drop in pages per session. Indexation rate stable or rising.
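For the schema markup hypothesis, a template could emit FAQPage JSON-LD along these lines. This is a sketch that follows the schema.org FAQPage shape; the function name and the sample question are hypothetical, and eligibility for SERP enhancements is decided by the search engine, not by the markup alone.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical FAQ content for one programmatic page.
markup = faq_jsonld([("Does it integrate with Slack?", "Yes, through the integrations page.")])
print(markup)
```

The output would be placed inside a `<script type="application/ld+json">` tag in the server-rendered template.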

Technical SEO for Product Teams

Engineering teams need clear boundaries to move fast without risk.

SSR and template gates

- Critical rendering path: target LCP under 2.5s at the 75th percentile on mobile.

- Metadata: unique title, description, canonical, and hreflang if multi-locale.

- Links: no orphaned programmatic pages. Breadcrumbs present.

Use official Lighthouse guidance at https://web.dev/measure/ to validate performance.

Content quality controls

- Thin page filter: block publish if word count under a threshold plus missing modules.

- Duplication check: fuzzy match against existing templates.

- QA gate: human review of a 5 percent sample of each release.

Rollback plan: set noindex on impacted slugs and remove from sitemaps until fixed.
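The thin page filter and duplication check can be sketched with the standard library. All thresholds and module names below are hypothetical placeholders, and `difflib` is just one simple way to do fuzzy matching; it will be slow on large page sets.

```python
from difflib import SequenceMatcher

MIN_WORDS = 150                      # hypothetical word-count threshold
REQUIRED_MODULES = {"intro", "faq"}  # hypothetical module names
DUPLICATE_RATIO = 0.9                # hypothetical similarity cutoff


def is_thin(body: str, modules: set[str]) -> bool:
    """Block publish when the page is short AND missing a required module."""
    too_short = len(body.split()) < MIN_WORDS
    missing_module = not REQUIRED_MODULES.issubset(modules)
    return too_short and missing_module


def is_near_duplicate(body: str, existing: list[str]) -> bool:
    """Return True if the body closely matches any existing page body."""
    return any(
        SequenceMatcher(None, body, other).ratio() >= DUPLICATE_RATIO
        for other in existing
    )


print(is_thin("CRM software for dentists", {"intro"}))
print(is_near_duplicate("CRM software for dentists", ["CRM software for dentists"]))
```

Pages that fail either check would be held back from publish and routed to the human QA sample.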

Prioritization Framework That Fits Loops

Use a lightweight, numeric model to select work that drives learning or revenue fast.

Score model

- Impact: projected change on the north star. 1 to 5.

- Confidence: evidence strength. 1 to 5.

- Effort: engineering and content days. 1 to 5.

- Risk: blast radius if wrong. 1 to 5.

Score = (Impact * Confidence) / (Effort + Risk)
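The score formula is simple enough to compute in a spreadsheet, but a short function makes the ranking reproducible. The backlog items and their ratings below are made up for illustration.

```python
def loop_score(impact: int, confidence: int, effort: int, risk: int) -> float:
    """Score = (Impact * Confidence) / (Effort + Risk), each rated 1 to 5."""
    for name, value in (("impact", impact), ("confidence", confidence),
                        ("effort", effort), ("risk", risk)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return (impact * confidence) / (effort + risk)


# Hypothetical backlog: (name, impact, confidence, effort, risk).
backlog = [
    ("FAQ above the fold", 3, 4, 1, 1),
    ("New title pattern", 4, 3, 2, 1),
    ("Pricing page redesign", 5, 2, 4, 3),
]
ranked = sorted(backlog, key=lambda item: loop_score(*item[1:]), reverse=True)
for name, *ratings in ranked:
    print(f"{name}: {loop_score(*ratings):.2f}")
```

Because effort and risk share the denominator, a high-impact idea with a large blast radius can rank below a modest but cheap and safe one.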

WIP limits and cadence

- Run 1 to 3 concurrent experiments per team.

- Ship decisions every 7 or 14 days.

- Keep a cooldown day for documentation and refactoring.

Measurement, Stats, and Stop Rules

Pick a simple method and apply it consistently across loops.

Practical stats approach

- For funnel metrics: use a difference-in-proportions test with a pooled standard error.

- For continuous metrics: use Welch's t-test when variances differ.

- For small samples: prefer Bayesian credible intervals, which make the size of the effect easier to read.

Always predefine the minimum detectable effect and the sample stop rule. Avoid peeking every hour, since repeated looks at unfinished data inflate false positives.
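For funnel metrics, the pooled difference-in-proportions test looks roughly like this. It is a sketch using only the standard library with a normal approximation; the counts are invented, and it should be run once at the predefined stop point rather than on every refresh.

```python
from math import erfc, sqrt


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for B vs A using a pooled standard error."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; no variation in the data")
    z = (p_b - p_a) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)).
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


# Hypothetical counts: control converts 100 of 1000, variant 130 of 1000.
z, p = two_proportion_z(100, 1000, 130, 1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```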

Segments and diagnostics

- Segment by traffic source, device, and user stage.

- Plot cumulative conversion to detect novelty effects.

- Use holdback groups for long-tail impacts.

Failure mode: declaring wins from biased segments. Guard against this with pre-registered segments and a global decision rule.

Distribution Loops for Learnings and Reach

Ship learnings across channels to amplify impact and collect more signal.

Turn results into assets

- Publish an internal note with the dashboard and decision.

- Create a short external post that explains what changed and why.

- Generate snippets for email, LinkedIn, and in-app changelogs.

Schedule and feedback

- Batch content weekly. Use a scheduler to post during peak hours.

- Track URL UTM performance and feed back into the backlog.

- Add common questions to support macros and docs.
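Tracking UTM performance depends on tagging links consistently. A small helper like the following could stamp every distributed URL; the parameter values and the example URL are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return the URL with utm_source, utm_medium, and utm_campaign set."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))  # keep any existing parameters
    query.update({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
    })
    return urlunsplit(parts._replace(query=urlencode(query)))


# Hypothetical post about experiment EXP-42, shared on LinkedIn.
print(add_utm("https://example.com/blog/post?ref=1", "linkedin", "social", "exp-42"))
```

Using the experiment ID as the campaign value makes it easy to join distribution results back to the backlog item.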

Execution Playbooks and Repo Hygiene

Codify repeatable work so new operators can run the loop without heavy handoff.

Playbook structure

- Goal and scope: what the playbook is for.

- Inputs: data sets, templates, repos, dashboards.

- Steps: numbered actions with owners and SLAs.

- Acceptance checks: metrics and QA criteria.

- Rollback: how to revert safely.

Repo structure example

- /playbooks/experiment-loop.md

- /checklists/seo-template-qa.md

- /sql/metrics-snapshots.sql

- /automation/ci-analytics-schema.yml

- /dashboards/activation.json

Example Weekly Loop Timeline

A simple timeline helps teams forecast effort and plan reviews.

Seven-day rhythm

- Day 1: intake and prioritize 1 to 3 items.

- Day 2: write briefs and specs. Confirm events.

- Day 3 to 4: build behind flags. Create dashboards.

- Day 5: launch to 10 to 20 percent. Monitor guardrails.

- Day 6: analyze results and segment cuts.

- Day 7: decide, document, distribute, and queue next.
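The Day 5 partial launch relies on stable bucketing so a user stays in or out of the rollout across sessions. Feature flag tools handle this for you; the sketch below only illustrates the idea with a hash, and the flag name and user IDs are hypothetical.

```python
import hashlib


def in_rollout(user_id: str, flag: str, percent: float) -> bool:
    """Deterministically place a user in the first `percent` of 10,000 buckets."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # bucket in 0..9999
    return bucket < percent * 100


# Roughly 10 percent of hypothetical users should see the flagged build.
exposed = sum(in_rollout(f"user-{i}", "new-onboarding", 10) for i in range(10_000))
print(exposed)
```

Including the flag name in the hash input means the same users are not always the first to be exposed to every experiment.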

Cadence table

Below is a compact view of roles and outputs per day.

| Day | Owner | Output |
|---|---|---|
| 1 | PM, Growth Lead | Ranked backlog, selected items |
| 2 | Analyst, Engineer | Brief, spec, data checks |
| 3-4 | Engineer | Flagged build, QA passed |
| 5 | Analyst | Live metrics and guardrails |
| 6 | Analyst | Result summary and segments |
| 7 | Team | Decision, doc, distribution |

Tooling Options Compared

Pick tools that integrate with your codebase and data warehouse.

Here is a brief comparison of common categories.

| Category | Option examples | Strengths | Constraints |
|---|---|---|---|
| Issue tracking | Linear, Jira | Templates, workflows, automations | Admin overhead if over-customized |
| Experimentation | LaunchDarkly, GrowthBook | Flags, targeting, governance | Requires clean event schema |
| Analytics | Snowflake, BigQuery, PostHog | Warehouse-first, event capture | Cost and data modeling effort |
| Dashboards | Looker, Metabase, Mode | Versioned queries, alerts | Query governance and sprawl |
| SEO tools | GSC, Screaming Frog, Ahrefs | Crawl, keywords, coverage | Sampling and data alignment |

Governance, Ethics, and Risk Controls

Loops must protect users and brand.

Privacy and compliance

- Minimize PII in experiments. Use hashed IDs.

- Honor consent flags in analytics and targeting.

- Document data retention for experiment logs.

Risk review

- Add a red team review for experiments that change pricing or permissions.

- Require legal review for SEO content that compares competitors.

- Keep an incident log for rollbacks and customer impact.

Review Cadence and Continuous Improvement

A loop improves only if you inspect it.

Weekly and monthly reviews

- Weekly: pipeline health, SLA breaches, blocked items.

- Monthly: win rate, median effect size, time to decision.

- Quarterly: playbook diffs, new templates, deprecations.

Metrics for the loop itself

- Lead time: intake to decision.

- Throughput: experiments decided per week.

- Win rate: accepted changes divided by decided experiments.

- Rework rate: iterations required before acceptance.
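These loop metrics can be computed from a simple log of decided experiments. The record fields below are hypothetical, and rework rate is interpreted here as the average number of iterations per decided experiment, which is one reasonable reading of the definition above.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass
class Experiment:
    intake: date      # when the idea entered the pipeline
    decided: date     # when accept / iterate / roll back was called
    accepted: bool
    iterations: int   # build-measure rounds before the decision


def loop_metrics(experiments: list[Experiment], weeks: float) -> dict[str, float]:
    """Summarize lead time, throughput, win rate, and rework for a period."""
    if not experiments:
        raise ValueError("no decided experiments in the period")
    decided = len(experiments)
    lead_times = [(e.decided - e.intake).days for e in experiments]
    return {
        "median_lead_time_days": median(lead_times),
        "throughput_per_week": decided / weeks,
        "win_rate": sum(e.accepted for e in experiments) / decided,
        "avg_iterations": sum(e.iterations for e in experiments) / decided,
    }


# Hypothetical two-week log.
log = [
    Experiment(date(2024, 1, 1), date(2024, 1, 8), accepted=True, iterations=1),
    Experiment(date(2024, 1, 2), date(2024, 1, 12), accepted=False, iterations=2),
]
print(loop_metrics(log, weeks=2))
```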

Key Takeaways

- Start with one north star metric and guardrails.

- Build a visible pipeline with owners, gates, and SLAs.

- Automate templates, data checks, and reporting.

- Apply the loop to programmatic SEO with strict QA.

- Document wins and ship them through distribution loops.

Close the loop every week. Small, fast decisions compound into durable growth.