Growth teams ship faster when experiments run on a fixed cadence with clear gates and automated checks. The right loop removes guesswork and lets wins compound.

This guide shows technical product teams how to design, run, and scale experiment loops for growth SEO. It is for operators and developers who own programmatic SEO, distribution, and analytics. The key takeaway: define a tight loop with inputs, workflow, QA gates, and outcomes, then automate the repeatable parts.

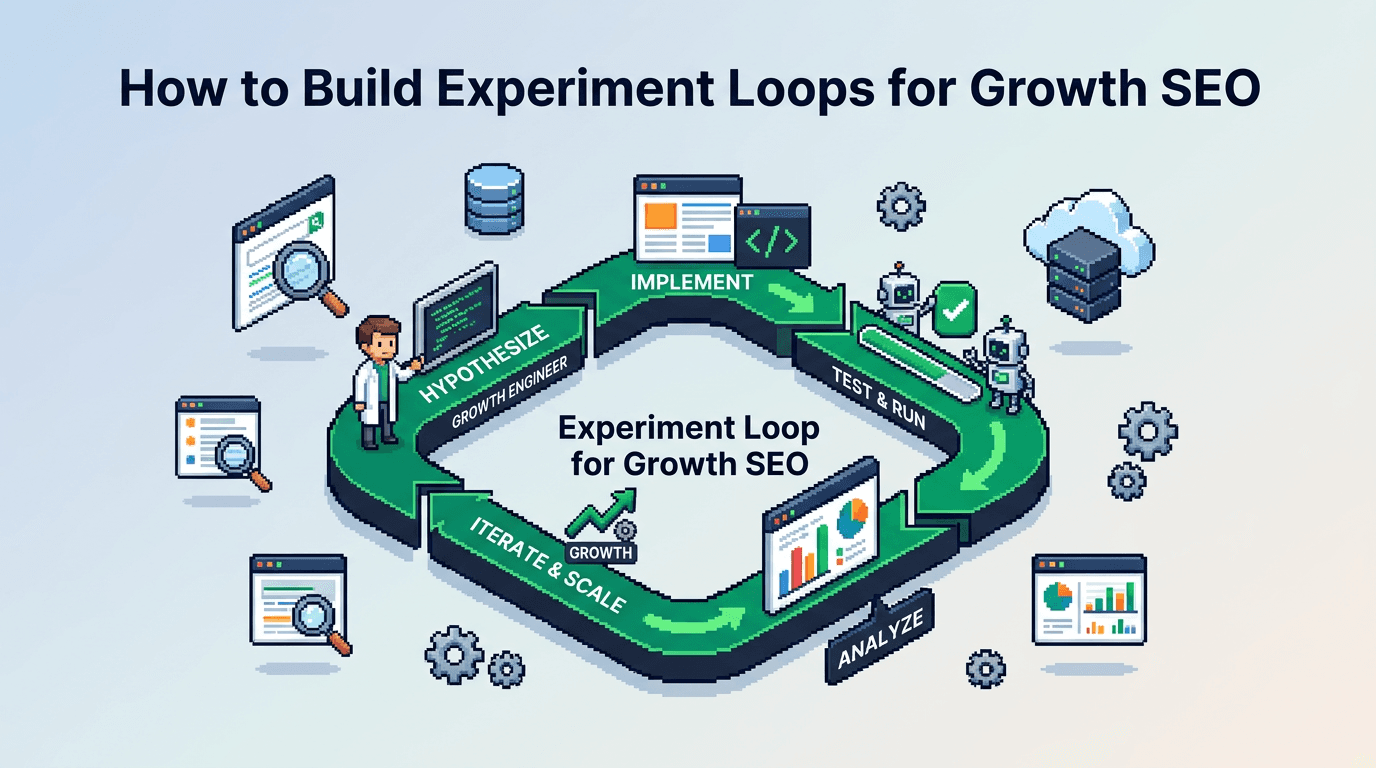

What Are Experiment Loops in Growth SEO?

Experiment loops are repeatable workflows that move from idea to shipped change to measured impact. They close with a decision and a next action.

Core components

- Inputs: hypotheses, opportunities, and backlogs.

- Process: build, QA, ship, measure.

- Outputs: decision, documentation, and next test.

Why loops beat ad hoc tests

- Consistency: results are comparable across cycles.

- Speed: automation reduces handoffs.

- Compounding: insights stack and inform programmatic SEO decisions.

Principles That Make Experiment Loops Work

Build loops that are simple, observable, and hard to skip.

Make decisions binary

Define a clear accept-or-reject rule before the test starts, so ambiguous results do not turn into after-the-fact debates. Tie thresholds to business metrics.
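A minimal sketch of a pre-registered binary rule, assuming the CTR delta and guardrail readings are computed upstream. The class and function names are hypothetical, and the example thresholds (0.7 pp CTR lift, 100 ms LCP regression) mirror the hypothesis template later in this guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRule:
    """Hypothetical pre-registered rule, written down before the test starts."""
    min_ctr_delta_pp: float        # minimum CTR lift, in percentage points
    max_lcp_regression_ms: float   # guardrail: largest allowed LCP increase
    max_schema_errors: int = 0     # guardrail: schema errors allowed in the variant


def decide(rule: DecisionRule, ctr_delta_pp: float,
           lcp_delta_ms: float, schema_errors: int) -> str:
    """Return exactly 'accept' or 'reject'; there is no middle outcome."""
    guardrails_ok = (lcp_delta_ms <= rule.max_lcp_regression_ms
                     and schema_errors <= rule.max_schema_errors)
    if guardrails_ok and ctr_delta_pp >= rule.min_ctr_delta_pp:
        return "accept"
    return "reject"
```

Because `decide` can only return `accept` or `reject`, a borderline result is a reject by construction, which is the point of fixing the rule before looking at data.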

Standardize inputs and outputs

Use a template for hypotheses and a template for results. Store both in one repo or doc system.

Automate the checks

Automate linting for SEO metadata, schema, links, performance, and crawlability. Reserve manual checks for edge cases.

A Minimal Experiment Loop You Can Ship This Week

Here is a baseline loop that fits most technical SEO and content tests.

Roles and owners

- PM or growth lead: prioritizes the queue.

- Developer: implements changes.

- Analyst: defines metrics and reads results.

- Editor or SEO: reviews content and on-page elements.

Tools and surfaces

- Issue tracker: Jira or Linear.

- Repo: GitHub with CI.

- Data: BigQuery or Snowflake, plus Looker or Metabase.

- SEO: Screaming Frog, PageSpeed CLI, and a crawler API.

Workflow steps

1) Define hypothesis in the issue using the template below.

2) Size effort and expected impact. Add ROI tag.

3) Implement in a short-lived branch.

4) Run CI: tests, SEO lints, and Lighthouse.

5) Ship the variant to 10 to 30 percent of eligible pages if feasible, keeping the rest as control.

6) Collect metrics for the defined window.

7) Decide with pre-written criteria. Log the outcome.

8) Roll forward or roll back. Queue the next test.
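Search engines typically see one version of each URL, so a partial rollout for an SEO test is usually a split of pages rather than of visitors. A minimal sketch of the partial rollout in step 5, assuming pages are bucketed by a stable hash of the URL; `assign_bucket` and the experiment ID format are hypothetical.

```python
import hashlib


def assign_bucket(url: str, experiment_id: str, variant_share: float) -> str:
    """Deterministically assign a page (not a visitor) to 'variant' or 'control'."""
    if not 0.0 < variant_share < 1.0:
        raise ValueError("variant_share must be between 0 and 1")
    # Salting with the experiment ID keeps buckets independent across experiments.
    digest = hashlib.sha256(f"{experiment_id}:{url}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a float in [0, 1).
    position = int.from_bytes(digest[:8], "big") / 2**64
    return "variant" if position < variant_share else "control"
```

The assignment is stable across builds, so a page never flips between control and variant mid-test, and different experiments draw different page subsets instead of always testing on the same ones.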

Acceptance criteria

- CI passes on all gates.

- Exposure threshold reached.

- Observed effect meets the pre-registered threshold at the chosen confidence level.

Templates You Can Copy

Use these lightweight templates to reduce friction.

Hypothesis template

- Goal: increase non-brand organic clicks for template X.

- Hypothesis: changing H2 structure to include entity Y will improve CTR and dwell time.

- Metrics: CTR from GSC (primary); session duration from analytics (secondary).

- Test window: 14 days, minimum 3k impressions.

- Variant: new H2 and intro copy with entity Y.

- Control: current template.

- Guardrails: no LCP regression above 100 ms, no schema errors.

- Decision rule: ship if the CTR delta is at least 0.7 percentage points with a stable bounce rate.
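A rough sample-size estimate helps check whether the window and impression floor can resolve a 0.7 pp decision rule. This sketch uses the standard normal-approximation formula for a two-sided, two-proportion test, assuming alpha 0.05, 80% power, and a baseline CTR you supply. It treats every impression as an independent trial, which GSC data is not, so read the result as an optimistic lower bound.

```python
import math
from statistics import NormalDist


def impressions_per_arm(baseline_ctr: float, mde_pp: float,
                        alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate impressions needed per arm to detect an absolute CTR lift."""
    if mde_pp <= 0:
        raise ValueError("mde_pp must be positive")
    p1 = baseline_ctr
    p2 = baseline_ctr + mde_pp / 100.0          # percentage points -> proportion
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)
```

At an assumed 3% baseline CTR, `impressions_per_arm(0.03, 0.7)` comes out a little above 10,000 impressions per arm, so a 3k floor may be too small to resolve a 0.7 pp change at that baseline.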

Result template

- Outcome: accept or reject.

- Impact: +0.9 pp CTR, neutral session duration.

- Evidence: GSC query filter, screenshot link, dashboard link.

- Roll action: promote to 100 percent or revert commit.

- Next: propose an entity Y2 exploration for long-tail pages.

Instrumentation for Technical SEO Experiments

You need clean measurement to trust the loop.

Data model for page templates

- Dimensions: template_id, entity_id, route, device, country.

- Metrics: impressions, clicks, ctr, sessions, conv_rate, lcp, cls, index_status.

- Time grain: daily.

Event capture and joins

- Pull GSC query and page data daily into the warehouse.

- Join analytics sessions by canonical URL and date.

- Store performance metrics from Lighthouse CI per commit.
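An in-memory sketch of the daily join, assuming GSC and analytics rows are already exported as dicts with hypothetical keys (`page`, `url`, `date`, `clicks`, `impressions`, `sessions`). In practice this would be SQL in the warehouse, and the normalization in `canonicalize` (lowercase host, drop query and fragment, strip a trailing slash) is an assumption to replace with your site's own canonical rules.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit


def canonicalize(url: str) -> str:
    """Lowercase scheme and host, drop query and fragment, strip a trailing slash."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, "", ""))


def join_daily(gsc_rows: list[dict], analytics_rows: list[dict]) -> list[dict]:
    """Aggregate both sources to (canonical URL, date), then left-join sessions onto GSC."""
    clicks = defaultdict(int)
    impressions = defaultdict(int)
    for row in gsc_rows:
        key = (canonicalize(row["page"]), row["date"])
        clicks[key] += row["clicks"]
        impressions[key] += row["impressions"]

    sessions = defaultdict(int)
    for row in analytics_rows:
        sessions[(canonicalize(row["url"]), row["date"])] += row["sessions"]

    joined = []
    for key in sorted(impressions):
        url, day = key
        impr = impressions[key]
        joined.append({
            "url": url,
            "date": day,
            "impressions": impr,
            "clicks": clicks[key],
            "ctr": clicks[key] / impr if impr else 0.0,
            "sessions": sessions.get(key, 0),
        })
    return joined
```

Aggregating both sides before joining prevents session counts from being duplicated when GSC returns several rows per page (for example, one per device).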

Automation Workflows That Cut Manual Steps

Automate the repetitive gates to increase velocity and quality.

Pre-commit checks

- SEO lint: title length, meta description presence, canonical, robots, hreflang, schema.

- Link checker: internal link targets exist and return 200.

- Content lints: banned patterns, thin-content thresholds.
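A minimal pre-commit lint for a few of the metadata checks above, using only the standard-library `html.parser`. It covers the title, meta description, canonical link, and a `noindex` robots check; hreflang and schema validation are left out. The 60-character title limit is a common heuristic, not a documented search-engine rule, and the function names are hypothetical.

```python
from html.parser import HTMLParser


class HeadCollector(HTMLParser):
    """Collect <title> text, named <meta> tags, and the canonical link."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta = {}
        self.canonical = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and attrs.get("name"):
            self.meta[attrs["name"].lower()] = attrs.get("content") or ""
        elif tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.canonical = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def seo_lint(html: str, max_title_chars: int = 60) -> list[str]:
    """Return a list of lint failures; an empty list means the page passes."""
    page = HeadCollector()
    page.feed(html)
    page.close()
    errors = []
    title = page.title.strip()
    if not title:
        errors.append("missing <title>")
    elif len(title) > max_title_chars:
        errors.append(f"title longer than {max_title_chars} chars")
    if not page.meta.get("description", "").strip():
        errors.append("missing meta description")
    if not page.canonical:
        errors.append("missing canonical link")
    if "noindex" in page.meta.get("robots", "").lower():
        errors.append("robots meta contains noindex")
    return errors
```

A hook can run `seo_lint` on each rendered changed route and block the commit when any list is non-empty.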

CI pipeline

- Build SSR bundle and run integration tests.

- Run Lighthouse CI against LCP, TBT, and CLS thresholds.

- Crawl changed routes with headless crawler.

- Post a pass or fail status back to the PR.

Programmatic SEO and Template-Level Tests

Programmatic SEO multiplies small wins across many pages. Test at the template level.

What to test on programmatic pages

- Title and H2 patterns with entity variables.

- Intro paragraph that sets user intent.

- Internal link blocks that surface sibling entities.

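- Schema variations for FAQ, HowTo, or Product when relevant. Google now limits FAQ rich results to well-known government and health sites and no longer shows HowTo rich results, so judge those variants on effects other than rich-result eligibility.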
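Title and H2 patterns on programmatic pages are usually templates filled with entity variables. A sketch using `string.Template`; the pattern, the `brand` suffix, and the 60-character fallback are illustrative assumptions, not a recommended format.

```python
from string import Template

# Hypothetical variant pattern: entity plus benefit, with a brand suffix.
TITLE_PATTERN = Template("$entity: $benefit | $brand")


def render_title(entity: str, benefit: str, brand: str, max_chars: int = 60) -> str:
    """Fill the title pattern; drop the brand suffix if the result is too long."""
    title = TITLE_PATTERN.substitute(entity=entity, benefit=benefit, brand=brand)
    if len(title) > max_chars:
        # Only the suffix is dropped; an overlong entity or benefit is left as-is.
        title = f"{entity}: {benefit}"
    return title
```

Keeping the pattern in one constant means control and variant differ by a single template string, which makes the test easy to roll back.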

Guardrails for scale

- Enforce QA gates at build time.

- Cap daily publish volume during rollout.

- Monitor index coverage and crawl rate for anomalies.
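A sketch of the daily publish cap, assuming the rollout is an ordered list of URLs; `publish_schedule` and any cap value you pass are hypothetical.

```python
from datetime import date, timedelta


def publish_schedule(urls: list[str], daily_cap: int, start: date) -> dict[date, list[str]]:
    """Split a rollout into consecutive daily batches of at most daily_cap pages."""
    if daily_cap < 1:
        raise ValueError("daily_cap must be at least 1")
    schedule = {}
    for offset, i in enumerate(range(0, len(urls), daily_cap)):
        schedule[start + timedelta(days=offset)] = urls[i:i + daily_cap]
    return schedule
```

Pausing the schedule when index coverage or crawl rate looks anomalous keeps a bad template from reaching every page at once.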

Distribution Loops After Shipping a Win

Distribution compounds the impact of each accepted experiment.

Turn results into reusable assets

- Write a short changelog entry with outcome and snippet.

- Generate snippets for social, email, and community posts.

- Update the internal playbook with the new rule and example.

Light automation for distribution

- Use a scheduler to post 3 variant snippets across 14 days.

- Reply to comments and questions with context and a link to the result dashboard.
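The scheduler step can be as simple as spacing posts evenly across the window. A sketch assuming three snippets over 14 days, with the first post on day 0 and the last on day 13; handing the dates to an actual posting tool is out of scope, and `spread_posts` is a hypothetical helper.

```python
from datetime import date, timedelta


def spread_posts(snippets: list[str], start: date,
                 window_days: int = 14) -> list[tuple[date, str]]:
    """Space posts evenly over the window: first on day 0, last on day window_days - 1."""
    if len(snippets) <= 1:
        return [(start, s) for s in snippets]
    step = (window_days - 1) / (len(snippets) - 1)
    # int() rounds each offset down, so no post falls outside the window.
    return [(start + timedelta(days=int(i * step)), s) for i, s in enumerate(snippets)]
```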

Example: Improving CTR on Entity Pages in SSR React

This example shows the loop applied to a real pattern.

Context and goal

- Context: SSR React site with programmatic entity pages.

- Goal: increase CTR on the top 200 entity pages within 30 days.

Setup and test

- Change the title and H2 to include the entity plus a benefit.

- Add an intro sentence of 20 words or fewer that confirms intent.

- Add an internal link module pointing to the next-step resource.

Metrics and decision

- Primary: CTR delta vs control after 14 days.

- Guardrails: LCP under 2.5 s, no schema errors, no index drops.

- Decision: promote if the CTR gain exceeds 0.7 pp with a stable bounce rate.

Comparison of Experiment Surfaces

Use this table to choose where to run the next test.

| Surface | Typical win size | Time to implement | Risk level | Best for |
|---|---|---|---|---|
| Title pattern | Small to medium | Hours | Low | Quick CTR lifts |
| H2 structure | Small to medium | Hours | Low | Intent clarity |
| Intro copy | Medium | Hours | Low | Engagement |
| Internal links | Medium | Day | Medium | Crawl and depth |
| Schema | Medium | Day | Medium | Rich results |
| Template layout | Large | Days | High | Comprehensive gains |

Execution Playbooks and Review Cadence

The loop is a playbook. Keep it visible and current.

Weekly cadence

- Monday: triage backlog and select two tests.

- Midweek: implement and ship to partial traffic.

- Friday: review dashboards and record provisional outcomes.

Monthly review

- Aggregate results by surface and template.

- Refresh decision rules and thresholds.

- Retire surfaces with low signal.

Failure Modes and Rollbacks

Plan for what breaks. Make rollbacks cheap.

Common failure modes

- No measurable effect due to low volume.

- Confounded by seasonality or campaign noise.

- Performance regressions from added components.

Rollback checklist

- Revert commit and purge cache.

- Remove from experiment registry.

- Annotate dashboards with the rollback timestamp.

Example Queries and Checks

Use these queries and commands to operationalize the loop.

GSC CTR comparison

- Pull daily impressions and clicks by page for control and variant.

- Calculate CTR and the delta. Apply a simple two-sample comparison to confirm the direction of the effect.
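A sketch of the two-sample comparison as a pooled two-proportion z-test on aggregated clicks and impressions. It treats impressions as independent, which overstates confidence when clicks cluster by page and query, so use it to confirm direction rather than as a final significance call. The function and key names are hypothetical.

```python
import math
from statistics import NormalDist


def ctr_comparison(control_clicks: int, control_impr: int,
                   variant_clicks: int, variant_impr: int) -> dict:
    """Pooled two-proportion z-test comparing variant CTR against control CTR."""
    ctr_c = control_clicks / control_impr
    ctr_v = variant_clicks / variant_impr
    pooled = (control_clicks + variant_clicks) / (control_impr + variant_impr)
    se = math.sqrt(pooled * (1 - pooled) * (1 / control_impr + 1 / variant_impr))
    if se == 0:
        raise ValueError("no variation in clicks; the z-test is undefined")
    z = (ctr_v - ctr_c) / se
    return {
        "ctr_control": ctr_c,
        "ctr_variant": ctr_v,
        "delta_pp": (ctr_v - ctr_c) * 100,               # percentage points
        "z": z,
        "p_value": 2 * (1 - NormalDist().cdf(abs(z))),  # two-sided
    }
```

The sign of `z` gives the direction; `delta_pp` is in the same units as the decision rule, so it can feed a pre-registered accept-or-reject check directly.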

Lighthouse CI thresholds

- Set the LCP budget at 2.5 s (p75) for both desktop and mobile.

- Fail the PR if thresholds are exceeded on changed routes.
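Lighthouse CI can enforce budgets itself through assertions in its config file. When a custom gate is easier, a small script can read the Lighthouse JSON report (`lhr`), where each audit under `audits` carries a `numericValue`. A sketch assuming one report per changed route: the 2.5 s LCP budget comes from the text, while the TBT and CLS budgets are placeholder assumptions. A single lab run is not a field p75, so collecting several runs per route (Lighthouse CI's `numberOfRuns`) reduces noise.

```python
# LCP budget from the text; TBT and CLS budgets are placeholder assumptions.
BUDGETS = {
    "largest-contentful-paint": 2500,   # milliseconds
    "total-blocking-time": 200,         # milliseconds (assumed)
    "cumulative-layout-shift": 0.1,     # unitless (assumed)
}


def check_budgets(lhr: dict, budgets: dict = BUDGETS) -> list[str]:
    """Compare a parsed Lighthouse JSON report against budgets; return failures."""
    failures = []
    for audit_id, limit in budgets.items():
        value = lhr["audits"][audit_id]["numericValue"]
        if value > limit:
            failures.append(f"{audit_id}: {value} > {limit}")
    return failures
```

A CI step would load each report with `json.load`, call `check_budgets`, and exit non-zero when any list is non-empty so the PR is marked as failed.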

How to Prioritize the Backlog

Not all ideas deserve a slot. Rank by impact and cost.

Scoring model

- Impact: expected clicks or conversions if it wins.

- Confidence: evidence from prior tests or benchmarks.

- Effort: estimated hours to implement and QA.
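One way to combine the three factors is an ICE-style score: expected impact times confidence, divided by effort. The formula and the one-hour effort floor are assumptions for illustration, not a rule from this guide.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    impact: float        # expected extra clicks or conversions if it wins
    confidence: float    # 0 to 1, from prior tests or benchmarks
    effort_hours: float  # estimated hours to implement and QA


def score(idea: Idea) -> float:
    """Expected value per hour: impact x confidence / effort (floored at 1 hour)."""
    return idea.impact * idea.confidence / max(idea.effort_hours, 1.0)


def rank(ideas: list[Idea]) -> list[Idea]:
    """Highest score first."""
    return sorted(ideas, key=score, reverse=True)
```

The effort floor stops near-zero estimates from pushing trivial tasks to the top of the queue.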

Practical rule of thumb

Pick two high-confidence small bets and one medium bet per week.

Governance and Documentation

Documentation prevents repeat mistakes and speeds onboarding.

Single source of truth

- Keep hypothesis and result templates in one repo.

- Link PRs, dashboards, and final decisions.

Access and reviews

- Everyone can read. Owners can edit.

- Monthly audit to remove stale artifacts.

Key Takeaways

- Define a simple experiment loop with clear gates and automation.

- Test at the template level to scale programmatic SEO impact.

- Enforce guardrails for performance, schema, and index health.

- Document outcomes and turn wins into distribution assets.

- Review weekly and monthly to refine priorities and rules.

Close the loop every time. Ship, measure, decide, and move to the next test.